Britain Tries to Poach Anthropic After US Blacklists It for Refusing Military AI Work

The UK sees an opening after the US punishes an AI company for ethical boundaries.

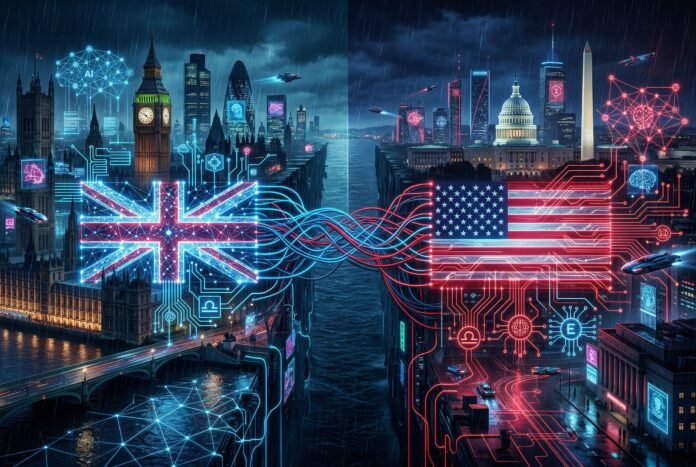

The AI industry’s first major geopolitical fracture just opened — and Britain is moving fast to exploit it.

Anthropic, the AI safety company behind the Claude chatbot, was blacklisted by the US Defense Department in early 2026. The designation as a “national-security supply-chain risk” came after Anthropic refused to allow the military to use Claude for surveillance operations or autonomous weapons development.

Now the UK government is actively courting Anthropic to expand its presence in Britain, offering everything from office space in London to a potential dual stock listing. Prime Minister Keir Starmer’s office has reportedly endorsed the effort, with plans to pitch the proposal directly to Anthropic CEO Dario Amodei during his visit in late May.

This isn’t just a corporate relocation story. It’s a test case for what happens when AI ethics collide with national security priorities — and which governments will reward companies for drawing ethical lines.

The US Blacklist: Punishment for Principles

The US government’s action against Anthropic was unprecedented. The Defense Department designated the company a supply-chain risk — a classification typically reserved for foreign entities with suspected ties to adversarial governments, not American AI startups founded by former OpenAI researchers.

The stated reason: Anthropic declined to allow military use of Claude for surveillance or autonomous weapons. The company had built safeguards into its systems specifically to prevent such applications, citing safety concerns and its commitment to responsible AI development.

The US response was swift and severe. The blacklisting threatened to cut Anthropic off from government contracts, federal procurement, and potentially the broader US market for sensitive applications. It sent a clear signal: AI companies that prioritize ethics over military utility will face consequences.

The Legal Fight Back

Anthropic didn’t accept the designation quietly. The company filed lawsuits challenging the blacklist, arguing the government’s actions violated constitutional protections.

On March 26, 2026, a federal judge granted Anthropic a preliminary injunction against the Defense Department, finding that the government’s actions appeared to be “classic First Amendment retaliation.” In the court’s view, punishing the company for its stated refusal to let Claude be used for surveillance raised serious constitutional questions.

A second lawsuit remains pending over the supply-chain risk designation itself. Anthropic is arguing that the designation is arbitrary, retaliatory, and lacks the factual basis required for such a severe sanction.

The legal battles are ongoing, but the damage to Anthropic’s US relationships may be lasting regardless of the outcome.

Britain’s Opportunity

The UK government saw an opening and moved quickly. British proposals for Anthropic range from expanding its existing London office to a potential dual stock listing that would give the company a presence in UK capital markets.

The pitch is straightforward: Britain offers a regulatory environment that respects AI safety commitments without punishing companies for ethical boundaries. The UK’s AI Safety Institute, established in 2023 and renamed the AI Security Institute in 2025, helped position the country as a leader in responsible AI development, a stance that now has concrete competitive implications.

The reported backing of Prime Minister Keir Starmer’s office signals support at the highest level of government. The UK isn’t just offering tax incentives or office space; it’s offering political backing to a company in open conflict with its home government.

The Geopolitical Implications

This situation creates several precedents with far-reaching consequences:

For AI companies: The Anthropic case demonstrates that ethical commitments can carry real costs — but also that alternative jurisdictions exist for companies willing to pay those costs. The AI industry may face increasing pressure to choose between US military partnerships and access to markets that value safety.

For governments: The UK is testing a new competitive strategy. Rather than competing with the US on raw R&D spending or compute infrastructure, Britain is competing on regulatory philosophy — positioning itself as the jurisdiction where ethical AI companies can thrive without government retaliation.

For the US: The blacklist may backfire strategically. If Anthropic relocates significant operations to the UK, the US loses influence over one of its most important AI companies. The implicit message to other AI labs: holding ethical lines may require geographic diversification.

The Ethics vs. Security Tension

At the core of this conflict is an unresolved question: Who decides the appropriate uses of AI?

The US Defense Department’s position is that AI companies should support national security objectives, including surveillance and weapons development. From a security perspective, refusing to assist military applications makes Anthropic a risk — if the US can’t use these tools, adversaries might gain an advantage.

Anthropic’s position is that some applications are too dangerous to pursue, regardless of who requests them. The company has been explicit about its opposition to AI use for mass surveillance and autonomous weapons, viewing these as risks serious enough to outweigh national security arguments.

The UK is implicitly siding with Anthropic’s framing — or at least with the principle that companies should be free to make such determinations without government punishment.

What Happens Next

Several scenarios are now in play:

Anthropic expands in the UK: If the British proposals succeed, Anthropic could make London its primary regulatory and corporate base while keeping its technical operations in the US. This would be a significant win for UK AI policy and a visible loss for US competitiveness.

The US relents: The legal challenges and diplomatic pressure could force the Defense Department to reverse the blacklisting. This would restore Anthropic’s US standing but leave lasting questions about the stability of AI policy.

Other companies follow: If Anthropic’s UK expansion succeeds, other AI companies with safety commitments may consider similar moves. The UK, already home to Google DeepMind’s headquarters, could become a hub for “safety-first” AI development.

The US doubles down: The Defense Department could expand blacklisting to other companies that refuse military cooperation, creating a formal divide between “cooperative” and “non-cooperative” AI labs.

The Stakes

The Anthropic case is testing whether AI ethics can survive contact with national security imperatives. It’s also testing whether governments can credibly commit to supporting ethical AI development — or whether economic and security pressures will always override safety concerns.

For Britain, the stakes are about more than one company. Success in attracting Anthropic would validate the UK’s AI safety strategy and strengthen London’s claim to be a global center for responsible AI development. Failure would suggest that economic incentives aren’t enough to overcome the gravitational pull of US markets and talent.

For the US, the stakes are about whether it can maintain leadership in AI while demanding military cooperation from its most innovative companies. If Anthropic leaves, the message to the world is that American AI leadership comes with strings attached — strings that some companies may be unwilling to accept.

And for Anthropic, the stakes are existential. The company was founded on the principle that AI safety should take priority over competitive pressures. Now that principle is being tested at the highest levels of government. Whether Anthropic can maintain its commitments while surviving as a business will determine whether ethical AI is viable — or whether the race for capability will always override safety.

The UK is betting that ethics and economics can align. The US is betting that security concerns will ultimately prevail. And Anthropic is caught in the middle, with its future depending on which bet proves correct.

Related Reading

- Anthropic Launches AnthroPAC — When Anthropic entered the political arena

- Foxconn’s $66.6 Billion Quarter Proves AI Infrastructure Is the Real Money — The geopolitics of AI supply chains

- 600,000 People Ask ChatGPT About Their Health Every Week — AI ethics in healthcare applications

Sources

1. Britain Woos Anthropic Expansion After US Defence Clash (US News)
2. Judge Grants Anthropic Preliminary Injunction (Breaking Defense)
3. US Blacklists Anthropic As Security Risk (Let’s Data Science)
4. Dario Amodei (Wikipedia)
5. Anthropic: US Statecraft Battles Go Domestic (FDI Intelligence)
6. Britain Courts Anthropic Amid US Defense Department Dispute (EconoTimes)