From ELIZA to ChatGPT: Understanding how we got here and where we are going.

Table of contents

- The Hierarchy That Explains Everything

- The Foundation: Machine Learning

- The Neural Revolution: Deep Learning

- The Paradigm Shift: Foundation Models

- Large Language Models: The Star of the Show

- Beyond Language: The Multi-Modal Future

- Generative AI: The Creative Layer

- The Trade-Offs: Power and Problems

- The Strategic Implications

- Looking Forward

- Related Reading

- Sources

In the mid-1960s, a computer program called ELIZA surprised users by mimicking human conversation. It was a parlor trick by today’s standards—pattern matching and scripted responses—but it represented something profound: the first glimpse of machines that could seem to think.

Sixty years later, ChatGPT and modern AI systems process billions of words, write code, and answer questions with nuanced understanding. The journey from ELIZA to GPT-4 is not just a story of bigger computers. It is a fundamental restructuring of how we build intelligent systems.

Understanding this evolution is not academic curiosity. For businesses, investors, and builders, the shift from traditional AI to foundation models represents the biggest change in how value gets created from data since the internet itself.

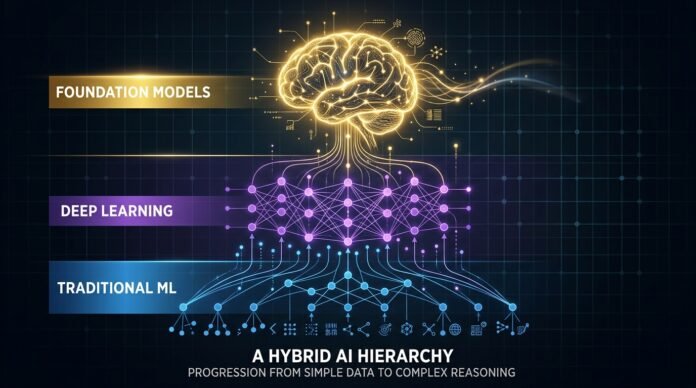

The Hierarchy That Explains Everything

If you are confused by AI terminology, you are not alone. Machine learning, deep learning, foundation models, large language models, generative AI—these terms get thrown around interchangeably. But they describe a clear hierarchy, and understanding that hierarchy is essential for making smart decisions about AI investment and implementation.

Here is the structure:

Artificial Intelligence (broadest field)

└── Machine Learning (algorithms that learn from data)

    └── Deep Learning (multi-layer neural networks)

        └── Foundation Models (massive scale, pre-trained)

            └── Large Language Models (language-specific)

Each layer narrows the focus while increasing capability. Each layer builds on what came before. And critically, each layer represents a different cost structure, risk profile, and strategic value.

The Foundation: Machine Learning

Machine learning emerged as a distinct approach in the 1980s and 1990s. Rather than programming computers with explicit rules (“if X happens, do Y”), researchers developed algorithms that could learn patterns from data.

The breakthrough was statistical. Show a machine learning algorithm thousands of examples of spam emails, and it learns to identify patterns that distinguish spam from legitimate messages. The algorithm does not need to understand what “Nigerian prince” means. It learns that emails containing those words, combined with certain formatting patterns, correlate with the messages users have marked as spam.
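The spam example can be sketched as a tiny word-count classifier. This is a minimal illustration in the style of naive Bayes with add-one smoothing, not a production filter; the four training messages and the function names `train` and `spam_score` are made up for this sketch.

```python
import math
from collections import Counter


def train(messages):
    """Count words per class. messages is a list of (text, is_spam) pairs."""
    counts = {True: Counter(), False: Counter()}
    totals = {True: 0, False: 0}
    for text, is_spam in messages:
        counts[is_spam].update(text.lower().split())
        totals[is_spam] += 1
    return counts, totals


def spam_score(text, counts, totals):
    """Return log-odds that text is spam; positive means 'more likely spam'."""
    vocab_size = len(set(counts[True]) | set(counts[False]))
    n_spam = sum(counts[True].values())
    n_ham = sum(counts[False].values())
    # Start from the prior odds, then add evidence from each word.
    score = math.log(totals[True] / totals[False])
    for word in text.lower().split():
        p_spam = (counts[True][word] + 1) / (n_spam + vocab_size)
        p_ham = (counts[False][word] + 1) / (n_ham + vocab_size)
        score += math.log(p_spam / p_ham)
    return score


# Hypothetical labeled examples (assumption: invented for illustration).
EXAMPLES = [
    ("nigerian prince needs your bank details", True),
    ("claim your free prize now", True),
    ("meeting moved to tuesday afternoon", False),
    ("please review the attached report", False),
]

counts, totals = train(EXAMPLES)
print(spam_score("free prize from a prince", counts, totals) > 0)
print(spam_score("review the meeting report", counts, totals) < 0)
```

The classifier never learns what any word means. It only tracks which words appeared more often in messages labeled as spam.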

Machine learning has three main flavors:

Supervised learning trains on labeled data. You feed the algorithm inputs paired with correct outputs. Show it thousands of house descriptions with their sale prices, and it learns to predict prices for new houses.

Unsupervised learning finds patterns without labels. Feed it customer purchase data, and it might discover that certain products cluster together—people who buy A often buy B—without being told what to look for.

Reinforcement learning learns through trial and error in an environment. The algorithm takes actions, receives rewards or penalties, and adjusts its strategy accordingly. This powers game-playing AIs and robotics.
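To make the supervised case concrete, here is a minimal sketch of the house-price idea: fit a straight line to labeled examples, then predict a price for an unseen house. The sizes and prices are invented for illustration, and ordinary least squares is just one of many possible supervised methods.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Apply the learned line to a new input."""
    return slope * x + intercept


# Hypothetical training data: floor area in square feet and sale price in dollars.
sizes = [1000, 1500, 2000, 2500]
prices = [200_000, 290_000, 410_000, 500_000]

slope, intercept = fit_line(sizes, prices)
print(predict(1800, slope, intercept))
```

The inputs paired with correct outputs are the labels. The learned slope and intercept are the model.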

Traditional machine learning still powers much of the world’s AI infrastructure. Credit scoring, recommendation engines, fraud detection—these often use techniques like linear regression, decision trees, and support vector machines that predate the deep learning revolution.

The key insight: not every problem needs neural networks. Sometimes a simple statistical model, properly tuned, outperforms complex approaches.

The Neural Revolution: Deep Learning

Deep learning is a subset of machine learning focused on artificial neural networks with multiple layers, hence “deep.”

Neural networks are not new. The concept dates to the 1940s. But they required computational power and data volumes that did not exist until the 2010s. When those resources became available, deep learning transformed multiple fields simultaneously.

The architecture is loosely inspired by biological brains. Nodes (artificial neurons) connect in layers. Input flows through hidden layers to output. Each connection has a weight that adjusts during training. With enough layers and parameters—modern systems have billions—these networks can learn incredibly complex patterns.
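The flow from input through hidden layers to output can be sketched in a few lines. This is a toy forward pass with hand-picked weights and a sigmoid activation, both assumptions for illustration; real networks learn their weights during training and have vastly more parameters.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """One fully connected layer: each row of weights feeds one output node."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Pass the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical weights: 2 inputs -> 3 hidden nodes -> 1 output node.
LAYERS = [
    ([[0.5, -0.5], [0.25, 0.75], [-1.0, 1.0]], [0.0, 0.1, -0.1]),
    ([[1.0, -1.0, 0.5]], [0.0]),
]

output = network_forward([1.0, 2.0], LAYERS)
print(output)
```

Training consists of nudging the numbers in `LAYERS` until the outputs match the desired answers. That step is omitted here.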

Deep learning excels at unstructured data: images, audio, natural language. Traditional machine learning struggles here because human-engineered features break down. How do you define “cat-ness” in pixel values? Deep learning does not require explicit definitions. It learns hierarchical representations directly from data.

Early layers might detect edges. Middle layers combine edges into shapes. Deeper layers assemble shapes into objects. The network discovers these representations through training, not human specification.
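As a rough illustration of what an edge detector computes, the sketch below slides a small filter along one row of pixel values. The filter here is written by hand, which is an assumption for clarity; in a trained network, filters like this emerge from the data. As in most deep learning libraries, the operation is technically a cross-correlation.

```python
def convolve_1d(signal, kernel):
    """Slide the kernel along the signal and sum the products at each position."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


# One row of a hypothetical image: dark pixels on the left, bright on the right.
row = [0, 0, 0, 9, 9, 9]
edge_kernel = [-1, 1]  # responds to a jump in brightness between neighbors

response = convolve_1d(row, edge_kernel)
print(response)  # [0, 0, 9, 0, 0]
```

The response is zero in flat regions and peaks exactly where the brightness changes, which is the sense in which the filter detects an edge.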

This capability enabled the AI breakthroughs of the 2010s: image classifiers matching or exceeding reported human accuracy on the ImageNet benchmark, speech recognition becoming practical, machine translation improving dramatically.

But deep learning had limitations. Each application typically required training its own network on task-specific data. A system trained to recognize cats could not translate French. The knowledge did not transfer across tasks.

The Paradigm Shift: Foundation Models

In 2021, researchers at Stanford’s Institute for Human-Centered Artificial Intelligence coined a new term: foundation models. They observed that AI was converging on a new paradigm, and they wanted to name it.

The concept is simple but transformative. Instead of training separate models for each task, train one massive model on vast amounts of data, then adapt it to specific applications.

The training process is generative and self-supervised: the text itself supplies the correct answers, so no human labeling is needed. Feed the model terabytes of text, and train it to predict the next word in sentences. “No use crying over spilled…” → predict “milk.” This seemingly simple task, at massive scale, forces the model to learn grammar, facts, reasoning patterns, and even something approaching common sense.
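The next-word objective can be illustrated with a deliberately tiny stand-in. The sketch below counts which word follows which in a three-sentence corpus and predicts the most frequent follower. It is a bigram counting model, not a neural network, and the corpus is invented, but the training signal is the same: the next word in the text is the label.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """For each word, count the words that immediately follow it."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of word, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text (assumption: made up for illustration).
CORPUS = [
    "no use crying over spilled milk",
    "the cat lapped up the spilled milk",
    "he wiped up the spilled coffee",
]

model = train_bigrams(CORPUS)
print(predict_next(model, "spilled"))  # milk
```

A real foundation model replaces the count table with billions of learned parameters and conditions on far more than one preceding word.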

The result is a foundation—a base model containing broad knowledge and capabilities that can be adapted to countless specific tasks.

This changes the economics of AI development. Previously, building an AI system meant collecting task-specific data, training a model, and deploying it. Each application required this full pipeline. Now you can take a pre-trained foundation model and fine-tune it with relatively small amounts of task-specific data.

The cost reduction is dramatic. Training GPT-4 reportedly cost over $100 million. Fine-tuning a pre-trained model for a specific application might cost thousands of dollars. This democratizes access to capabilities that were previously available only to the largest tech companies.

Large Language Models: The Star of the Show

Large language models (LLMs) are the most visible type of foundation model. GPT-4, Claude, Llama—these are LLMs.

The name breaks down precisely:

Large refers to scale. These models have billions or trillions of parameters. GPT-4 is reported to have over 1 trillion parameters, although OpenAI has not confirmed a figure. This scale enables nuanced understanding and sophisticated generation.

Language specifies the domain. While foundation models exist for images, audio, and scientific data, LLMs focus on text. They are trained on vast corpora of human writing—books, articles, code, conversations.

Model acknowledges what this actually is: a computational system, a series of algorithms and parameters that processes input and produces output.

LLMs can handle remarkably diverse language tasks: answering questions, translating between languages, summarizing documents, writing code, analyzing sentiment, even creative writing. The same underlying model, with appropriate prompting or fine-tuning, can perform all these functions.

This versatility is the point. Foundation models are not narrow tools. They are general capabilities that can be directed toward specific purposes.

Beyond Language: The Multi-Modal Future

Foundation models extend far beyond text.

Vision models like DALL-E and Stable Diffusion generate images from text descriptions. They learn the relationship between visual concepts and language, enabling creation of images that never existed.

Scientific models tackle domain-specific problems. AlphaFold predicts protein structures—arguably the most significant AI breakthrough in biology. IBM’s MoLFormer accelerates molecule discovery for drug development.

Audio models generate speech, compose music, and translate between spoken languages. The “fake Drake” songs that circulate online come from audio foundation models.

Code models like GitHub Copilot autocomplete programs as developers type. They are trained on public code repositories and learn patterns of software construction.

IBM is building across all these domains: Watson for language, Maximo for vision, Project Wisdom for code, MoLFormer for chemistry, geospatial models for climate research.

The unifying principle: train once on broad data, then apply everywhere.

Generative AI: The Creative Layer

Generative AI is the capability that has captured public attention. It is not a separate technology but an application of foundation models.

If foundation models provide the underlying structure and knowledge, generative AI is what you do with that knowledge. It is the creative expression—the text, images, music, and code that emerges from the foundation.

This distinction matters for business strategy. Foundation models are infrastructure. They are the platform. Generative AI is the application layer—the user-facing products and services built on that platform.

Companies are making bets at both levels. Some are building foundation models (OpenAI, Google, Meta, IBM). Others are building applications on top of existing models (most startups). The infrastructure layer requires massive capital. The application layer requires insight into user needs and workflow integration.

The Trade-Offs: Power and Problems

Foundation models are not a free lunch. They come with significant costs and risks that any serious implementation must address.

Compute costs are the most visible challenge. Training the largest models can require tens of thousands of GPUs running for months, with reported costs of $100 million or more. Even inference (running the trained model) is expensive at scale. A single GPT-4 query may cost only a fraction of a cent, but millions of queries add up.
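The way small per-query costs accumulate is simple arithmetic. The sketch below assumes a hypothetical price of $0.01 per 1,000 tokens and 500 tokens per query; neither number is a quoted price from any provider.

```python
def monthly_inference_cost(queries_per_day, tokens_per_query,
                           price_per_1k_tokens, days=30):
    """Estimate monthly spend from volume and a per-1,000-token price."""
    total_tokens = queries_per_day * tokens_per_query * days
    return total_tokens / 1000 * price_per_1k_tokens


# Hypothetical workload and price (assumptions, not real provider pricing).
cost_per_query = 500 / 1000 * 0.01  # half a cent per query
monthly = monthly_inference_cost(
    queries_per_day=1_000_000,
    tokens_per_query=500,
    price_per_1k_tokens=0.01,
)
print(f"${cost_per_query:.3f} per query, about ${monthly:,.0f} per month")
```

Under these assumptions, half a cent per query becomes roughly $150,000 per month at one million queries a day.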

This creates a barrier to entry. Only well-capitalized organizations can train foundation models from scratch. Everyone else must use APIs or open-source models, creating dependencies on providers.

Trustworthiness is the deeper problem. Foundation models are trained on internet-scale data. That data contains biases, misinformation, hate speech, and toxic content. No team of human annotators can vet terabytes of training data. Often, we do not even know exactly what data was used.

The result: models that can generate plausible-sounding falsehoods, reflect societal biases, or produce harmful content when prompted. Addressing these issues—through careful training, filtering, and alignment techniques—is an active research area and business imperative.

IBM’s approach focuses on two dimensions: improving efficiency (reducing costs) and improving trustworthiness (reducing risks). This reflects enterprise priorities—businesses need AI that is both economically viable and reliably safe.

The Strategic Implications

For organizations deciding how to approach AI, the hierarchy provides a decision framework:

Traditional machine learning remains appropriate for structured data problems with clear patterns. Credit scoring, demand forecasting, quality control—these often do not need neural networks. Simpler models are faster, cheaper, and more interpretable.

Deep learning is necessary for unstructured data: images, audio, complex text. If you are building computer vision for manufacturing inspection or speech recognition for customer service, you need neural networks.

Foundation models become relevant when you need general capabilities that can be adapted to multiple tasks. Customer service chatbots, content generation, code assistance—these are natural fits. The economics favor foundation models when the alternative is training multiple task-specific systems.

Fine-tuning vs. prompting is a tactical choice. Fine-tuning adapts the model with task-specific data, updating parameters. It is more expensive but yields better performance for specific applications. Prompting frames tasks as text completion without updating the model. It is cheaper and faster but may be less precise.
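Prompting, in practice, often means assembling a block of text that shows the model a few solved examples and ends where the answer should go. The sketch below builds such a few-shot prompt for sentiment analysis. The function name, the "Review:" and "Sentiment:" labels, and the examples are all assumptions for illustration, and no model is called.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Frame a task as text completion: instruction, solved examples, open slot."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")  # the model is expected to complete this line
    return "\n".join(lines)


# Hypothetical examples (assumption: invented for illustration).
prompt = build_few_shot_prompt(
    "Classify each review as positive or negative.",
    [
        ("Arrived on time and works perfectly.", "positive"),
        ("Broke after two days.", "negative"),
    ],
    "Battery life is far better than advertised.",
)
print(prompt)
```

No parameters are updated here. Changing the task means changing the text, which is why prompting is cheaper and faster than fine-tuning.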

The trend is toward larger, more capable foundation models accessed via APIs, with organizations focusing on application-layer value rather than infrastructure. This mirrors cloud computing’s evolution—few companies run their own data centers anymore.

Looking Forward

The shift from task-specific AI to foundation models represents a fundamental restructuring of how intelligence gets built and deployed. It is as significant as the shift from mainframes to personal computers, or from desktop software to cloud services.

The implications extend beyond technology. Foundation models concentrate capability. Organizations that can afford to train them gain advantages that are difficult to replicate. This creates power dynamics that societies will need to address.

At the same time, the adaptability of foundation models democratizes access to AI capabilities. Small teams can build sophisticated applications by leveraging pre-trained models. The barrier to entry for AI-powered products has never been lower.

Understanding this landscape—where we are in the hierarchy, what each level offers, what trade-offs each entails—is essential for anyone making decisions about AI. The technology is moving fast. The frameworks for thinking about it need to keep pace.

From ELIZA’s simple patterns to GPT-4’s nuanced understanding, the trajectory is clear: we are building systems that capture more of human knowledge and capability in trainable form. The foundation model paradigm is the current state of that journey. It will not be the final state. But it is the context within which the next advances will emerge.

The question for organizations is not whether to engage with this technology. It is how to engage strategically—where in the hierarchy to build, what trade-offs to accept, what value to create. The answers will determine who thrives in the AI-transformed economy that is already taking shape.

Related Reading

- AI & Tech News Roundup: April 14, 2026 — Latest developments in AI, including Meta’s $14B model and the Trump-Anthropic paradox

- NVIDIA ISING: The Open-Source AI Bridge to Practical Quantum Computing — How NVIDIA is bridging classical AI and quantum computing

- NVIDIA’s “Physical AI” Play — Teaching robots to think, talk, and actually DO things

- AI’s “Second Wind” — Why the market is shifting from hype to hard cash

- AI Week in Review — Weekly roundup of AI developments and market signals

Sources

- IBM Training: “Machine Learning vs. Deep Learning vs. Foundation Models”

- IBM Training: “Large Language Models (LLMs)” by Kate Soule

- Stanford Institute for Human-Centered Artificial Intelligence: Foundation Models