The evolution from simple AI models to compound systems that plan, reason, and act—and why 2024 is the year of AI agents.

In late 2022, ChatGPT demonstrated that large language models could generate remarkably human-like text. But ask it how many vacation days you have remaining, and it would either hallucinate an answer or admit it did not know. The model had no access to your company’s HR system, no ability to query a database, no way to verify facts against real-world data.

This limitation was not a bug; it was fundamental. Standalone language models, however impressive, are constrained by their training data and architecture. On their own, they cannot check the current weather, perform exact arithmetic reliably, or access private information.

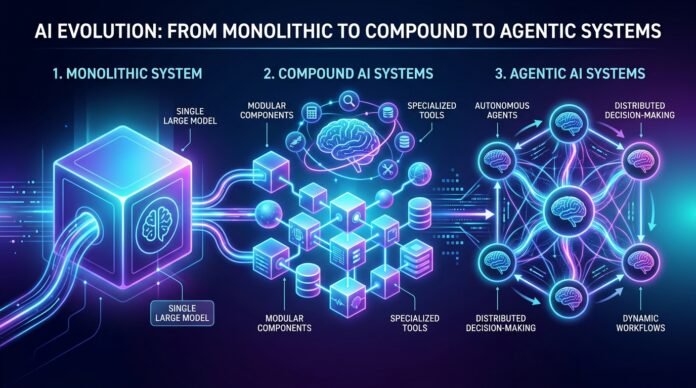

The solution emerging in 2024 is a fundamental shift in how we build AI systems. Instead of relying on monolithic models, we are constructing compound AI systems that combine multiple components into integrated architectures capable of autonomous action.

The Limitation: Why Standalone Models Are Not Enough

The Knowledge Cutoff Problem

Language models are trained on data collected up to a specific point in time. GPT-4's training data, for example, ends in 2023 or earlier, depending on the version. Ask about events after the cutoff, and the model either admits ignorance or confidently hallucinates.

The Personal Data Barrier

Standalone models have no access to personal or proprietary information. Your calendar, your company’s customer database, your private documents—these exist in systems the model cannot access.

The vacation days example: When you ask “How many vacation days do I have?” the model faces an impossible task. It does not know who “I” refers to. It has no access to your company’s HR system. The best it can do is provide generic information about typical vacation policies.

What Models Do Well

- Summarizing documents

- Generating first drafts

- Explaining concepts

- Creative writing

- Pattern recognition

The insight driving compound AI systems: use models for what they do well, and supplement them with other components for everything else.

Compound AI Systems: The Modular Approach

A compound AI system combines multiple components—language models, databases, calculators, APIs, verification modules—into an integrated architecture.

The Vacation Days Solution

System Architecture:

User Query: "How many vacation days do I have?"

↓

Language Model (generates database query)

↓

Database Query Execution

↓

Result: "10 days remaining"

↓

Language Model (generates response)

↓

Response: "You have 10 vacation days remaining."

Modularity: The Core Principle

Model components:

- Large language models for general reasoning

- Tuned models specialized for specific domains

- Image generation models for visual content

Programmatic components:

- Database connectors for structured data access

- API clients for external services

- Calculators for precise mathematics

- Verification modules for checking outputs
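To make the split between model components and programmatic components concrete, here is a minimal sketch of the vacation days flow. It assumes a hypothetical `vacation` table in an in-memory SQLite database, and it replaces both language model calls with stubs (`generate_sql` and `generate_response` are illustrative names, not part of any library). A real system would call an LLM at those two points.

```python
import sqlite3


def generate_sql(question: str, user_id: int) -> tuple[str, tuple]:
    """Stand-in for the model step that turns a question into a query.

    The user's identity comes from the application session, not the model.
    A real system would prompt an LLM here; this stub returns a fixed,
    parameterized query so the sketch runs offline.
    """
    return "SELECT days_remaining FROM vacation WHERE user_id = ?", (user_id,)


def run_query(conn: sqlite3.Connection, sql: str, params: tuple) -> int:
    """Programmatic component: execute the query against the database."""
    row = conn.execute(sql, params).fetchone()
    if row is None:
        raise LookupError("no vacation record for this user")
    return row[0]


def generate_response(days: int) -> str:
    """Stand-in for the model step that phrases the final answer."""
    return f"You have {days} vacation days remaining."


def answer_vacation_question(conn: sqlite3.Connection, question: str, user_id: int) -> str:
    """Chain the three steps: model -> database -> model."""
    sql, params = generate_sql(question, user_id)
    days = run_query(conn, sql, params)
    return generate_response(days)


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway database with one hypothetical employee record."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE vacation (user_id INTEGER PRIMARY KEY, days_remaining INTEGER)"
    )
    conn.execute("INSERT INTO vacation VALUES (1, 10)")
    return conn


if __name__ == "__main__":
    db = make_demo_db()
    print(answer_vacation_question(db, "How many vacation days do I have?", user_id=1))
```

Because each step is a separate function, any one of them can be swapped out (a different database, a tuned model) without touching the others, which is the point of the modular design.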

Retrieval Augmented Generation: The Gateway to Compound Systems

One of the most widely adopted compound AI patterns is Retrieval Augmented Generation (RAG).

How RAG Works

Query → Retrieve Relevant Documents → Add to Context → Generate Answer

Components:

- Retrieval system searches knowledge base

- Language model generates answer using retrieved context

- Knowledge base provides external data source
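The three components above can be sketched in a few lines. This is a toy illustration under loud assumptions: the knowledge base entries are made up, retrieval is plain word overlap rather than the embedding search a production system would likely use, and `generate` is a placeholder for an LLM call.

```python
import re

# Made-up policy snippets standing in for a real knowledge base.
KNOWLEDGE_BASE = [
    "Employees accrue 1.5 vacation days per month of service.",
    "Expense reports must be submitted within 30 days of purchase.",
    "Remote work requires manager approval.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (a toy retriever)."""
    query_terms = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_terms & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, context: list[str]) -> str:
    """Add the retrieved documents to the model's context."""
    joined = "\n".join(f"- {doc}" for doc in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Placeholder for an LLM call; echoes the prompt so the flow is visible."""
    return f"[model output for prompt]\n{prompt}"


def rag_answer(query: str) -> str:
    """Query -> retrieve -> add to context -> generate."""
    context = retrieve(query, KNOWLEDGE_BASE)
    return generate(build_prompt(query, context))
```

Note that `retrieve` always returns something: an off-topic query such as "What's the weather like?" still comes back with a policy document, because nothing in the pipeline asks whether searching the knowledge base was the right step in the first place.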

The Limitation of Fixed Paths

Most RAG systems follow the same path regardless of the query:

Every Query → Search Knowledge Base → Generate Answer

The weather query problem: If a user asks “What’s the weather like?” to a RAG system designed for company policies, it searches the company database and returns irrelevant information.

The Agentic Approach: Dynamic Control with LLMs

The next evolution puts large language models in charge of the control logic itself.

The Spectrum of Control

| Approach | Thinking Style | When to Use |
|---|---|---|
| Programmatic | Think fast, follow instructions | Narrow, well-defined problems |
| Agentic | Think slow, plan and adapt | Complex, unpredictable problems |
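The difference between the two rows comes down to who owns the control logic. The sketch below contrasts them using two hypothetical tools; `choose_tool` is a keyword check standing in for the LLM decision, purely so the example runs without a model.

```python
from typing import Callable


def search_policies(query: str) -> str:
    """Hypothetical tool: search the company policy knowledge base."""
    return "policy search results for: " + query


def get_weather(query: str) -> str:
    """Hypothetical tool: look up current weather."""
    return "weather lookup for: " + query


TOOLS: dict[str, Callable[[str], str]] = {
    "policies": search_policies,
    "weather": get_weather,
}


def programmatic_control(query: str) -> str:
    """Fixed path: every query goes to the policy search, whatever it asks."""
    return search_policies(query)


def choose_tool(query: str) -> str:
    """Stand-in for an LLM deciding which tool fits the query.

    A real agent would ask the model; a keyword check keeps this runnable.
    """
    return "weather" if "weather" in query.lower() else "policies"


def agentic_control(query: str) -> str:
    """Dynamic path: the (stubbed) model picks the tool at run time."""
    return TOOLS[choose_tool(query)](query)
```

The programmatic version is cheaper and easier to test, while the agentic version handles queries its designer did not anticipate, at the cost of depending on the model's choice being right.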

The Three Capabilities of AI Agents

1. Reasoning: Planning and Thinking

The ability to analyze problems, create plans, and think through steps systematically.

Example:

Query: "Plan a 2-week Europe trip with $3000 budget"

Reasoning:

- Break into subtasks: flights, accommodation, activities, food

- Estimate costs for each category

- Identify constraints

- Create day-by-day itinerary

2. Acting: Tool Use

The ability to use external programs—tools—to extend capabilities.

Common tools:

- Web Search: Find current information

- Database Query: Access structured data

- Calculator: Precise mathematics

- API Calls: Connect to services
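As a rough sketch of how tool use can be wired up, the example below registers a single calculator tool and dispatches to it from a text action. The `ToolName[argument]` action format and the `run_action` helper are assumptions made for this illustration; agent frameworks each define their own formats. The calculator walks the parsed expression tree instead of calling `eval`, so only basic arithmetic is accepted.

```python
import ast
import operator
import re

# Arithmetic operators the calculator is willing to evaluate.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression: str) -> float:
    """Evaluate +, -, *, / arithmetic without calling eval()."""

    def walk(node: ast.AST) -> float:
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")

    return walk(ast.parse(expression, mode="eval").body)


# Registry mapping tool names to callables; real systems would add more.
TOOLS = {"Calculate": calculator}


def run_action(action: str):
    """Parse 'ToolName[argument]' and dispatch to the registered tool."""
    match = re.fullmatch(r"(\w+)\[(.*)\]", action.strip())
    if match is None:
        raise ValueError(f"malformed action: {action!r}")
    name, argument = match.groups()
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](argument)


if __name__ == "__main__":
    print(run_action("Calculate[3 * (4 + 5)]"))
```

The model only has to emit the action string; the arithmetic itself is done by ordinary code, which is why the result is exact.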

3. Memory: Maintaining Context

Reasoning Memory (Chain-of-Thought): Stores the model’s internal reasoning process

Conversation History: Records of past interactions for personalization
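One simple way to picture the two kinds of memory is as two lists that get rendered into the model's prompt. The `AgentMemory` class below is a hypothetical structure for illustration only; real agents often add summarization or a persistent store on top.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Holds reasoning memory and conversation history side by side."""

    # Chain-of-thought scratchpad for the current task.
    reasoning_steps: list[str] = field(default_factory=list)
    # Past interactions as (role, text) pairs.
    conversation: list[tuple[str, str]] = field(default_factory=list)

    def add_thought(self, thought: str) -> None:
        self.reasoning_steps.append(thought)

    def add_turn(self, role: str, text: str) -> None:
        self.conversation.append((role, text))

    def build_context(self, max_turns: int = 4) -> str:
        """Render the most recent turns plus current reasoning as prompt text."""
        recent = self.conversation[-max_turns:] if max_turns > 0 else []
        lines = [f"{role}: {text}" for role, text in recent]
        lines += [f"thought: {thought}" for thought in self.reasoning_steps]
        return "\n".join(lines)
```

Capping the history with `max_turns` is one crude way to keep the prompt within the model's context window.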

The ReAct Framework: Reasoning and Acting in Loop

The most influential framework for building LLM agents is ReAct (Reasoning + Acting).

The ReAct Loop

THINK → ACT → OBSERVE → [REPEAT or FINAL ANSWER]

Step by step:

- Think (Reasoning): Analyze the problem, create a plan

- Act (Action): Execute the plan—use tools

- Observe (Observation): Receive results from actions

- Decide: If solved → final answer. If not → repeat from Think
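The loop can be sketched in a few lines. Everything here is an assumption made for illustration: `scripted_model` is a hard-coded stand-in for the LLM, the two tools return fixed values, and the `Step` structure is one of many possible ways to represent a model decision. It is not the implementation from the ReAct paper.

```python
from typing import Callable, NamedTuple, Optional


class Step(NamedTuple):
    thought: str
    tool: Optional[str] = None  # None means the agent is ready to answer
    argument: str = ""
    answer: str = ""


def scripted_model(observations: list[str]) -> Step:
    """Stand-in for the LLM: picks the next step from what has been observed."""
    if len(observations) == 0:
        return Step("I need the trip length first.", "lookup", "trip_days")
    if len(observations) == 1:
        return Step("Now I need daily sun hours.", "lookup", "sun_hours_per_day")
    if len(observations) == 2:
        days, hours = observations
        return Step("I can compute total hours.", "multiply", f"{days},{hours}")
    return Step("I have what I need.", answer=f"Total sun exposure: {observations[-1]} hours")


# Hard-coded values standing in for real memory and search lookups.
FAKE_DATA = {"trip_days": "10", "sun_hours_per_day": "8"}


def lookup(key: str) -> str:
    return FAKE_DATA[key]


def multiply(args: str) -> str:
    left, right = args.split(",")
    return str(int(left) * int(right))


TOOLS: dict[str, Callable[[str], str]] = {"lookup": lookup, "multiply": multiply}


def react(model: Callable[[list[str]], Step], tools: dict, max_steps: int = 10) -> str:
    """Run the think/act/observe loop until the model gives a final answer."""
    observations: list[str] = []
    for _ in range(max_steps):
        step = model(observations)                # THINK
        if step.tool is None:
            return step.answer                    # FINAL ANSWER
        result = tools[step.tool](step.argument)  # ACT
        observations.append(result)               # OBSERVE
    raise RuntimeError("no answer within the step budget")


if __name__ == "__main__":
    print(react(scripted_model, TOOLS))
```

The `max_steps` budget matters in practice: without it, a model that never decides it is done would loop indefinitely.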

Complex Problem Solving: The Sunscreen Example

Query: “I’m going to Florida next month. I’m planning on being outdoors a lot and I’m prone to burning. What is the number of two-ounce sunscreen bottles that I should bring?”

This requires:

- Vacation days from memory

- Sun hours in Florida from weather data

- Sunscreen dosage from health guidelines

- Mathematical calculation

- Unit conversion

Agent execution:

THOUGHT: Multi-step problem. Need vacation days, sun hours, dosage, calculation.

ACTION: Retrieve[memory: vacation days]

OBSERVATION: 10 vacation days

ACTION: Search[Florida sun hours next month]

OBSERVATION: 8 hours per day

ACTION: Search[CDC sunscreen dosage per hour]

OBSERVATION: 0.5 ounces per hour

ACTION: Calculate[(10 * 8 * 0.5) / 2]

OBSERVATION: 20 bottles

FINAL ANSWER: You should bring 20 two-ounce bottles of sunscreen.

The Autonomy Spectrum: Choosing the Right Approach

When to Use Programmatic Approach

Best for: Narrow problem domains, well-defined query types, predictable user needs

Example: Company vacation policy system—users ask about vacation days, not weather

When to Use Agentic Approach

Best for: Complex multi-step tasks, unpredictable query variety, problems requiring adaptation

Example: Autonomous code development—issues could be bugs, features, documentation

The Current Landscape: 2024 and Beyond

Compound Systems Are Here to Stay

What’s happening now:

- RAG everywhere in production LLM applications

- Tool use expanding to calculators, search, APIs

- Agent frameworks maturing (LangChain, LlamaIndex, AutoGen)

- Reasoning improving with each model generation

Human-in-the-Loop

Current best practice keeps humans involved:

- Approval gates before execution

- Review workflows for agent reasoning

- Correction loops for mistakes

- Escalation triggers for complex cases
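An approval gate, the first item above, might look something like the following. The tool names and the `approve` callback are hypothetical; in a real deployment the callback would typically surface the pending action in a review UI or chat prompt rather than return immediately.

```python
from typing import Callable

# A reviewer callback: receives the tool name and argument, returns True to allow.
Approver = Callable[[str, str], bool]


def run_with_approval(
    tool_name: str,
    argument: str,
    tools: dict[str, Callable[[str], str]],
    needs_approval: set[str],
    approve: Approver,
) -> str:
    """Run a tool call, pausing for human approval when the tool is sensitive."""
    if tool_name in needs_approval and not approve(tool_name, argument):
        # The rejection is returned as text so the agent can observe it and adapt.
        return f"BLOCKED: {tool_name}({argument!r}) was rejected by the reviewer"
    return tools[tool_name](argument)
```

Returning the rejection as an observation, rather than raising, lets the agent replan instead of crashing; whether that is the right behavior depends on the application.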

Implications and Future Impact

Employment and Workflows

Tasks likely to be automated:

- Information gathering and synthesis

- Routine analysis and reporting

- Initial drafting of documents and code

- First-line customer support

New roles likely to emerge:

- Agent supervisors and trainers

- Workflow designers for human-agent collaboration

- Agent safety and alignment specialists

Safety and Alignment

Autonomous agents pose genuine risks:

- An agent makes an incorrect decision with real-world consequences

- A tool call fails or has unintended side effects

- An agent pursues its goal in an unintended way

Mitigation: Constrained tool access, human oversight, extensive logging, alignment research
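Two of these mitigations, constrained tool access and logging, are straightforward to express in code. The sketch below is one possible shape, assuming a hypothetical `guarded_call` wrapper and an allowlist chosen per deployment; it uses Python's standard `logging` module for the audit trail.

```python
import logging
from typing import Callable

logger = logging.getLogger("agent.audit")


class ToolNotAllowed(Exception):
    """Raised when the agent requests a tool outside its allowlist."""


def guarded_call(
    tool_name: str,
    argument: str,
    tools: dict[str, Callable[[str], str]],
    allowed: set[str],
) -> str:
    """Run a tool only if it is on the allowlist, logging every attempt."""
    if tool_name not in allowed:
        logger.warning("denied tool=%s arg=%r", tool_name, argument)
        raise ToolNotAllowed(tool_name)
    logger.info("calling tool=%s arg=%r", tool_name, argument)
    result = tools[tool_name](argument)
    logger.info("result tool=%s value=%r", tool_name, result)
    return result
```

Logging denied attempts as well as successful calls gives reviewers a record of what the agent tried to do, not just what it did.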

Conclusion

The evolution from monolithic models to compound AI systems to autonomous agents represents a fundamental shift in how we build intelligent software. We are moving from systems that generate text to systems that plan, execute, observe, and adapt.

2024 is indeed the year of AI agents—not because the technology is perfect, but because it has crossed the threshold from research curiosity to practical tool. The compound AI systems we build today are the foundation for increasingly autonomous software that will define the coming decade.

Related Reading

- AI, Machine Learning, and Foundation Models — Foundational AI technologies powering agents

- AI Agents: The Rise of Autonomous Software — Multi-agent systems and deployments

- Natural Language Processing — How NLP enables agent communication

- The AI Infrastructure Stack — Technical foundations for agent deployment

- NVIDIA’s “Physical AI” Play — Agents interacting with the physical world

Sources

- IBM Training: “What Are AI Agents?”

- Yao et al. (2023): “ReAct: Synergizing Reasoning and Acting in Language Models”

- Wang et al. (2024): “A Survey on Large Language Model based Autonomous Agents”

- LangChain, LlamaIndex, AutoGen documentation

- OpenAI: Function calling and tool use documentation