From spam filters to ChatGPT—how NLP became the interface between humans and AI.

In 1950, Alan Turing proposed a test for machine intelligence: if a computer could converse naturally enough that a human judge could not reliably tell it apart from a person, it should be credited with intelligence. Seven decades later, we routinely hold fluent conversations with machines, yet most users have no idea how they work.

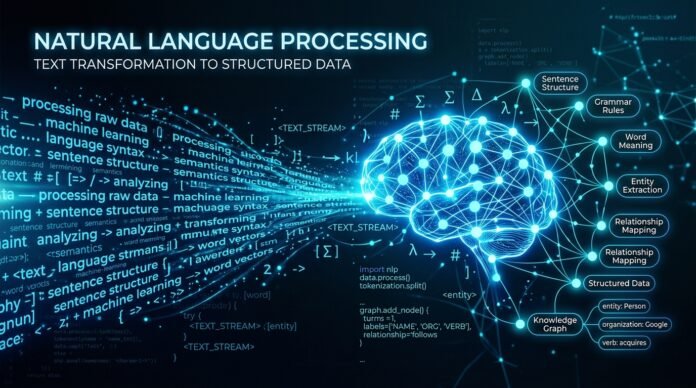

Natural Language Processing (NLP) is the technology that bridges human communication and machine computation. It is what lets you ask Siri about the weather, enables Google to understand your search queries, and powers the conversational abilities of ChatGPT. But NLP’s impact extends far beyond consumer convenience. It is transforming industries from healthcare to finance, enabling new forms of human-computer collaboration, and raising profound questions about language, meaning, and intelligence.

Understanding NLP matters for anyone building technology, making investment decisions, or navigating a world where language itself becomes a computational interface.

What Is NLP? The Translation Layer

At its core, NLP is about translation—not between languages (though it can do that), but between human expression and machine representation.

The Fundamental Problem

Humans communicate in unstructured text. We say things like “Add eggs and milk to my shopping list.” We understand this instantly. But to a computer, this is just a string of characters with no inherent meaning.

Unstructured text (human):

    "Add eggs and milk to my shopping list"

Structured representation (machine):

    ShoppingList {
      items: [
        {name: "eggs", quantity: 1},
        {name: "milk", quantity: 1}
      ],
      action: "add"
    }

NLP sits between these two worlds, translating between them. This translation happens in two directions:

- Natural Language Understanding (NLU): Unstructured → Structured

- Natural Language Generation (NLG): Structured → Unstructured

Most practical applications focus on NLU—teaching computers to understand what humans mean. But the most sophisticated systems, like modern chatbots, do both: they understand your input and generate appropriate responses.
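To make the two directions concrete, here is a minimal sketch in Python. It assumes a single hard-coded sentence pattern, and the function names `understand` and `generate` are made up for this illustration. Real NLU systems learn far more flexible mappings than one regular expression.

```python
import re

def understand(utterance: str) -> dict:
    """NLU: turn one narrow kind of sentence into a structured command."""
    match = re.match(r"add (.+) to my shopping list", utterance.strip(), re.IGNORECASE)
    if match is None:
        raise ValueError("utterance not covered by this toy pattern")
    # Split the item phrase on commas and on the word "and".
    names = [part for part in re.split(r",\s*|\s+and\s+", match.group(1)) if part]
    return {"action": "add", "items": [{"name": name, "quantity": 1} for name in names]}

def generate(command: dict) -> str:
    """NLG: turn the structured command back into a sentence."""
    names = [item["name"] for item in command["items"]]
    if len(names) > 1:
        listed = ", ".join(names[:-1]) + " and " + names[-1]
    else:
        listed = names[0]
    return f"I added {listed} to your shopping list."

command = understand("Add eggs and milk to my shopping list")
print(command)
print(generate(command))
```

The structured dictionary in the middle is the part a program can act on. Everything else is translation into and out of it.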

The NLP Toolkit: How It Actually Works

NLP is not a single algorithm. It is a collection of techniques applied in sequence to transform text into structured meaning. Think of it as a pipeline where each stage refines the understanding.

Stage 1: Tokenization—Breaking Text into Pieces

Before a computer can understand text, it needs to identify the basic units it is working with. Tokenization splits text into meaningful chunks.

Example:

Input: "Add eggs and milk to my shopping list"

Tokens: ["Add", "eggs", "and", "milk", "to", "my", "shopping", "list"]

Simple in English, where words are separated by spaces. More complex in languages like Chinese or Japanese, where word boundaries are not marked. Modern tokenizers handle this complexity, often using subword units that capture meaningful fragments even in unfamiliar languages.
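As a rough sketch of word-level tokenization, the snippet below uses a single regular expression from Python's standard library. It only covers space-delimited languages and is not a subword tokenizer. The function name `tokenize` is an assumption for this example.

```python
import re

def tokenize(text: str) -> list[str]:
    """Split text into word tokens and standalone punctuation marks."""
    # \w+(?:'\w+)* keeps contractions such as "Don't" together;
    # [^\w\s] emits each punctuation character as its own token.
    return re.findall(r"\w+(?:'\w+)*|[^\w\s]", text)

print(tokenize("Add eggs and milk to my shopping list"))
print(tokenize("Don't forget the milk!"))
```

Subword tokenizers used by modern language models go further and split rare words into smaller learned pieces. That is outside the scope of this sketch.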

Stage 2: Normalization—Finding the Roots

Words have different forms. “Run,” “running,” “ran,” and “runs” all express the same core concept. Normalization reduces these variations to canonical forms.

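Stemming uses rules to chop off suffixes (and sometimes prefixes):

- running → run

- runs → run

- ran → ran (irregular forms slip through, because no suffix rule applies)

Lemmatization uses a dictionary and morphological analysis to find the base form (lemma):

- ran → run

- better → good (lemma) vs better (most rule-based stemmers leave it unchanged)

- universal → universal (lemma) vs univers (stem)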

The difference matters. Stemming is faster but can produce nonsense. Lemmatization is more accurate but requires linguistic knowledge. Modern systems often use both, choosing the right approach for the task.
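The contrast can be sketched with a toy stemmer and a toy lemmatizer. Both are assumptions made for illustration: the three-suffix rule list is far smaller than a real stemmer's, and the `LEMMAS` dictionary is a hypothetical stand-in for a real lexical database.

```python
SUFFIXES = ("ing", "al", "s")  # toy rule set, checked in this order

# Hypothetical mini-dictionary standing in for a real lexical resource.
LEMMAS = {"running": "run", "runs": "run", "ran": "run", "better": "good"}

def stem(word: str) -> str:
    """Strip the first matching suffix if at least three letters remain."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stemmed = word[: -len(suffix)]
            # "runn" -> "run": drop one letter of a doubled final consonant.
            if len(stemmed) > 3 and stemmed[-1] == stemmed[-2] and stemmed[-1] not in "aeiou":
                stemmed = stemmed[:-1]
            return stemmed
    return word

def lemmatize(word: str) -> str:
    """Look the word up; fall back to the word itself when it is unknown."""
    return LEMMAS.get(word, word)

for word in ["running", "runs", "ran", "better", "universal"]:
    print(f"{word:10} stem={stem(word):8} lemma={lemmatize(word)}")
```

In this toy version the stemmer turns "universal" into the non-word "univers" and leaves the irregular form "ran" untouched. The dictionary lookup maps "ran" to "run" and "better" to "good", but only because someone put those entries there.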

Stage 3: Part-of-Speech Tagging—Understanding Grammar

Words change meaning based on their grammatical role. “Make” is a verb in “I will make dinner” but a noun in “What make is your laptop?”

Part-of-speech tagging identifies each token’s grammatical function:

- Nouns (objects, concepts)

- Verbs (actions, states)

- Adjectives (descriptions)

- Adverbs (modifiers)

- Prepositions (relationships)

This grammatical structure is essential for understanding meaning. A system that knows “make” is a verb in one context and a noun in another can parse sentences correctly.
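Here is a deliberately tiny sketch of how context can settle the tag for "make". The lexicon, the single disambiguation rule, and the tag names (loosely modeled on Universal POS tags) are all assumptions for this example. Production taggers are statistical models trained on annotated corpora.

```python
# Hypothetical lexicon covering only the two example sentences.
LEXICON = {
    "i": "PRON", "will": "AUX", "dinner": "NOUN", "what": "DET",
    "is": "AUX", "your": "DET", "laptop": "NOUN",
}
AMBIGUOUS = {"make"}  # can be a verb or a noun

def tag(tokens: list[str]) -> list[tuple[str, str]]:
    """Tag each token, using the previous tag to resolve ambiguous words."""
    tagged = []
    for token in tokens:
        word = token.lower()
        if word in AMBIGUOUS:
            previous_tag = tagged[-1][1] if tagged else None
            # Toy rule: after a determiner the word is a noun, otherwise a verb.
            pos = "NOUN" if previous_tag == "DET" else "VERB"
        else:
            pos = LEXICON.get(word, "X")  # "X" marks words outside the lexicon
        tagged.append((token, pos))
    return tagged

print(tag("I will make dinner".split()))
print(tag("What make is your laptop".split()))
```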

Stage 4: Named Entity Recognition—Identifying the Players

Language refers to things in the world. Named Entity Recognition (NER) identifies what those things are:

- IBM → Organization

- Arizona → Location (US State)

- Martin Keen → Person

- $50 million → Monetary Value

- January 15, 2024 → Date

NER transforms abstract text into structured knowledge. It knows that “IBM” is a company, “Arizona” is a place, and “Martin Keen” is a person. This enables applications like extracting contact information from documents, tracking mentions of companies in news, or building knowledge graphs from text.
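A minimal sketch of the idea combines a lookup table for names with regular expressions for money and dates. The `GAZETTEER` table, the label names, and the example sentence are assumptions made for illustration. Modern NER systems are trained models that generalize to names they have never seen.

```python
import re

# Hypothetical lookup table; real systems learn to recognize unseen names.
GAZETTEER = {
    "IBM": "ORGANIZATION",
    "Arizona": "LOCATION",
    "Martin Keen": "PERSON",
}
PATTERNS = [
    ("MONEY", re.compile(r"\$\d+(?:\.\d+)?(?: (?:million|billion))?")),
    ("DATE", re.compile(
        r"(?:January|February|March|April|May|June|July|August|September"
        r"|October|November|December) \d{1,2}, \d{4}")),
]

def find_entities(text: str) -> list[tuple[str, str]]:
    """Return (entity text, label) pairs ordered by position in the text."""
    found = []
    for name, label in GAZETTEER.items():
        for match in re.finditer(re.escape(name), text):
            found.append((match.start(), match.group(), label))
    for label, pattern in PATTERNS:
        for match in pattern.finditer(text):
            found.append((match.start(), match.group(), label))
    return [(entity, label) for _, entity, label in sorted(found)]

# Invented sentence for illustration; it does not describe a real event.
text = "Martin Keen of IBM will speak in Arizona on January 15, 2024 about a $50 million project."
for entity, label in find_entities(text):
    print(f"{entity} -> {label}")
```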

Stage 5: Dependency Parsing—Mapping Relationships

Words do not exist in isolation. They relate to each other in complex ways. Dependency parsing maps these relationships, revealing that eggs and milk are the items being added, and the shopping list is the destination.
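The sketch below shows what a program can do once those relationships exist. The arcs are written by hand for the shopping-list sentence, with labels that follow Universal Dependencies conventions as I understand them. A real parser would predict these arcs, and the helper names are made up for this example.

```python
# Hand-written arcs (head, relation, dependent) for
# "Add eggs and milk to my shopping list"; a real parser would predict these.
ARCS = [
    ("ROOT", "root", "Add"),
    ("Add", "obj", "eggs"),
    ("eggs", "conj", "milk"),
    ("milk", "cc", "and"),
    ("Add", "obl", "list"),
    ("list", "case", "to"),
    ("list", "nmod:poss", "my"),
    ("list", "compound", "shopping"),
]

def dependents(head: str, relation: str) -> list[str]:
    """All words attached to `head` by the given relation."""
    return [dep for h, rel, dep in ARCS if h == head and rel == relation]

def extract_command() -> dict:
    verb = dependents("ROOT", "root")[0]
    items = []
    for obj in dependents(verb, "obj"):
        items.append(obj)
        items.extend(dependents(obj, "conj"))  # coordinated items: "eggs and milk"
    target = dependents(verb, "obl")[0]
    modifiers = dependents(target, "compound")
    return {
        "action": verb.lower(),
        "items": items,
        "destination": " ".join(modifiers + [target]),
    }

print(extract_command())
```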

Real-World Applications: Where NLP Works Today

These techniques combine to power applications that touch billions of users daily.

Machine Translation: Breaking Language Barriers

The classic demonstration of NLP’s power—and its challenges—is machine translation.

The problem: Word-for-word translation fails because meaning depends on context. An often-repeated (and probably apocryphal) example: “The spirit is willing, but the flesh is weak” translated from English to Russian and back becomes “The vodka is good, but the meat is rotten.”

Modern solutions: Neural machine translation does not translate words. It encodes the meaning of the source sentence into a mathematical representation, then decodes that representation into the target language. This preserves context and handles idioms better than rule-based approaches.

Current capabilities:

- Google Translate handles 100+ languages with reasonable accuracy

- DeepL produces more natural-sounding translations for European languages

- Meta’s NLLB (No Language Left Behind) covers 200 languages

Virtual Assistants and Chatbots: Conversational Interfaces

Siri, Alexa, Google Assistant, and ChatGPT all rely on NLP to understand and respond to human language.

The architecture:

- Speech recognition converts audio to text (if voice input)

- Intent classification determines what the user wants

- Slot filling extracts specific parameters (dates, locations, names)

- Dialogue management maintains conversation context

- Response generation produces appropriate output

- Text-to-speech converts text to audio (if voice output)
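The two middle steps, intent classification and slot filling, can be sketched with keyword matching and regular expressions. The intent names, trigger words, and slot formats are hypothetical. Production assistants use trained classifiers rather than keyword sets.

```python
import re

INTENT_KEYWORDS = {  # hypothetical intents and trigger words
    "set_timer": {"timer", "countdown"},
    "get_weather": {"weather", "umbrella", "rain"},
}

def classify_intent(text: str) -> str:
    """Pick the intent whose keywords overlap most with the utterance."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    scores = {intent: len(words & keywords) for intent, keywords in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

def fill_slots(text: str) -> dict:
    """Extract the parameters the intent handler will need."""
    slots = {}
    lowered = text.lower()
    duration = re.search(r"(\d+)\s*(second|minute|hour)s?", lowered)
    if duration:
        slots["duration"] = {"value": int(duration.group(1)), "unit": duration.group(2)}
    day = re.search(r"\b(today|tomorrow)\b", lowered)
    if day:
        slots["day"] = day.group(1)
    return slots

def handle(text: str) -> dict:
    return {"intent": classify_intent(text), "slots": fill_slots(text)}

print(handle("Set timer for 5 minutes"))
print(handle("Should I bring an umbrella today?"))
```

The second utterance only works here because "umbrella" was added by hand as a weather keyword. Inferring that connection without being told is what separates later generations of assistants from keyword systems like this one.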

Evolution of capabilities:

- 1st Generation (2011-2016): Command recognition — “Set timer for 5 minutes”

- 2nd Generation (2016-2022): Intent understanding — “What’s the weather like?”

- 3rd Generation (2022-present): Conversational reasoning — “Should I bring an umbrella today?”

Sentiment Analysis: Reading the Emotional Tone

Not all text is neutral. Sentiment analysis determines whether text expresses positive, negative, or neutral emotions—and often detects specific emotions like joy, anger, or sadness.

Applications:

- Brand monitoring: Tracking what customers say about companies on social media

- Product reviews: Aggregating opinions about features and quality

- Customer service: Prioritizing angry customers or identifying satisfaction trends

- Financial markets: Gauging investor sentiment from news and social media
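The simplest form of sentiment analysis is a word-list scorer, sketched below. The word lists and the one-word negation rule are assumptions for illustration. Modern systems use trained classifiers that cope with sarcasm, intensity, and context far better.

```python
import re

POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "awful", "angry"}
NEGATORS = {"not", "never", "no"}

def sentiment(text: str) -> str:
    """Classify text as positive, negative, or neutral from word polarity."""
    score = 0
    negate = False
    for word in re.findall(r"[a-z']+", text.lower()):
        if word in NEGATORS:
            negate = True
            continue
        polarity = (word in POSITIVE) - (word in NEGATIVE)  # +1, -1, or 0
        score += -polarity if negate else polarity
        negate = False  # a negator only flips the word right after it
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("I love this phone, the camera is great"))
print(sentiment("The battery is not good"))
print(sentiment("The package arrived on Tuesday"))
```

The negation rule shows how brittle this approach is. "Not very good" would be scored as positive, because the negator only reaches the next word.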

Spam Detection: Filtering the Noise

One of NLP’s oldest applications remains ubiquitous: identifying unwanted email. Modern spam filters use machine learning trained on millions of labeled examples. They do not just look for keywords—they learn the statistical patterns that distinguish legitimate messages from spam.
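One classic way to learn such statistical patterns is a naive Bayes classifier, sketched here from scratch. The four training messages are invented, and the class name `NaiveBayesSpamFilter` is an assumption. Real filters train on vastly more data and use many more signals than word counts.

```python
import math
from collections import Counter

def tokenize(text: str) -> list[str]:
    return text.lower().split()

class NaiveBayesSpamFilter:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = Counter()

    def train(self, text: str, label: str) -> None:
        self.message_counts[label] += 1
        self.word_counts[label].update(tokenize(text))

    def predict(self, text: str) -> str:
        vocabulary = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_messages = sum(self.message_counts.values())
        scores = {}
        for label in ("spam", "ham"):
            # Log prior plus the sum of smoothed log likelihoods per word.
            score = math.log(self.message_counts[label] / total_messages)
            total_words = sum(self.word_counts[label].values())
            for word in tokenize(text):
                count = self.word_counts[label][word]
                score += math.log((count + 1) / (total_words + len(vocabulary)))
            scores[label] = score
        return max(scores, key=scores.get)

# Invented training messages, for illustration only.
spam_filter = NaiveBayesSpamFilter()
spam_filter.train("win free money now", "spam")
spam_filter.train("free prize claim now", "spam")
spam_filter.train("meeting moved to monday", "ham")
spam_filter.train("lunch with the team on monday", "ham")

print(spam_filter.predict("claim your free money"))
print(spam_filter.predict("team meeting on monday"))
```

No single keyword decides the outcome. Each word shifts the probability a little, and smoothing keeps an unseen word such as "your" from zeroing out either class.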

Information Extraction: Turning Text into Data

Much of the world’s knowledge exists only in text—research papers, legal documents, medical records, news articles. Information extraction transforms this unstructured knowledge into structured databases.

Example applications:

- Medical coding: Extracting diagnoses and procedures from clinical notes

- Legal discovery: Finding relevant documents in massive case files

- Financial analysis: Extracting earnings data from quarterly reports

- Scientific research: Building knowledge graphs from academic papers

The 2023-2024 Revolution: Large Language Models

The most significant development in NLP history occurred with the emergence of large language models (LLMs) like GPT-4, Claude, and Llama. These models represent a paradigm shift in how machines process language.

The Transformer Architecture

The breakthrough came in 2017 with Google’s “Attention Is All You Need” paper introducing the transformer architecture. Unlike previous approaches that processed text sequentially, transformers process entire sequences simultaneously using “attention mechanisms” that weigh the importance of different words relative to each other.

Key innovation: When processing the word “bank” in “I sat by the river bank,” the attention mechanism assigns a high weight to “river,” which pulls the representation of “bank” toward the riverside sense rather than the financial one. This contextual understanding enables more accurate language processing.
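The core computation, scaled dot-product attention, fits in a few lines of plain Python. The two-dimensional vectors below are hand-picked assumptions chosen so that "river" scores highest for the query. In a real transformer the queries, keys, and values come from learned projections of much larger embeddings.

```python
import math

def softmax(scores: list[float]) -> list[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is the attention-weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hand-picked 2-d vectors (assumptions for illustration, not learned embeddings).
tokens = ["sat", "river", "bank"]
keys = [[0.1, 0.9], [1.0, 0.0], [0.6, 0.4]]
values = keys
query_bank = [2.0, 0.0]  # the query issued while processing "bank"

weights, output = attention(query_bank, keys, values)
for token, weight in zip(tokens, weights):
    print(f"{token:6} {weight:.2f}")
```

The weights always sum to one, so the new representation of "bank" is a blend of its context, dominated here by "river".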

Pre-training and Fine-tuning

Modern NLP systems are built in two stages:

Pre-training: The model learns general language patterns through self-supervised prediction over massive text corpora (billions of documents from the internet). GPT-style models predict the next token, while BERT-style models predict masked words. This requires enormous computational resources but is done once per base model.

Fine-tuning: The pre-trained model is adapted to specific tasks with relatively small amounts of labeled data. This is much cheaper and can be done by organizations without massive compute budgets.
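As a loose analogy only, the sketch below uses a bigram counting model in place of a neural network. It is not how LLMs are trained, and both corpora are invented. It does show the two ideas that carry over: the training signal comes from the text itself (each word is the label for the word before it), and a small amount of domain text can shift what a generally trained model predicts.

```python
from collections import Counter, defaultdict

class BigramModel:
    """Predicts the next word from counts of adjacent word pairs."""

    def __init__(self):
        self.counts = defaultdict(Counter)

    def train(self, corpus: list[str]) -> None:
        for sentence in corpus:
            words = sentence.lower().split()
            # Self-supervision: every word serves as the target for its predecessor.
            for current, following in zip(words, words[1:]):
                self.counts[current][following] += 1

    def predict_next(self, word: str):
        followers = self.counts.get(word.lower())
        return followers.most_common(1)[0][0] if followers else None

# Invented corpora standing in for "general" and "domain" text.
general_corpus = [
    "the bank approved the loan",
    "the bank approved the mortgage",
    "the bank raised rates",
]
domain_corpus = [
    "the river bank flooded",
    "the bank flooded again",
    "the muddy bank flooded",
]

model = BigramModel()
model.train(general_corpus)            # stand-in for pre-training
before = model.predict_next("bank")
model.train(domain_corpus)             # stand-in for fine-tuning
after = model.predict_next("bank")
print(before, "->", after)
```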

Emergent Capabilities

As language models grew larger, they developed unexpected capabilities:

- In-context learning: They can learn new tasks from just a few examples in the prompt

- Chain-of-thought reasoning: They can work through problems step by step

- Code generation: They can write and explain computer programs

- Multilingual understanding: They can process dozens of languages without separate task-specific training for each one
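In-context learning is driven entirely by how the prompt is written. The sketch below assembles a few-shot prompt as a plain string. The "Review:" and "Sentiment:" layout and the example reviews are assumptions, and no model is called. The resulting text would be sent to whichever LLM you use.

```python
def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Lay out labeled examples followed by an unlabeled query."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Review: {text}", f"Sentiment: {label}", ""]
    # The prompt ends where the model is expected to continue.
    lines += [f"Review: {query}", "Sentiment:"]
    return "\n".join(lines)

prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [
        ("The screen is gorgeous and the battery lasts all day.", "positive"),
        ("It stopped working after a week.", "negative"),
    ],
    "Setup was painless and support answered quickly.",
)
print(prompt)
```

The model's weights never change. The two worked examples in the prompt are the only "training" it receives for this task.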

Current Challenges and Limitations

Despite remarkable progress, NLP faces significant challenges.

The Meaning Problem

Do language models actually understand language, or do they just predict likely sequences of words? Current systems struggle with:

- Common sense reasoning: Understanding basic facts about the physical world

- Causal reasoning: Distinguishing correlation from causation

- Mathematical reasoning: Performing reliable calculations

- Truthfulness: Distinguishing fact from fiction in their training data

Bias and Fairness

Language models learn from human-generated text, which contains human biases. They have been shown to reproduce stereotypes about gender, race, and other characteristics.

Resource Requirements

Training large language models requires massive computational resources—millions of dollars in GPU time for the largest models. This creates barriers to entry and raises environmental concerns about energy consumption.

Hallucination

Language models sometimes generate plausible-sounding but false information. This “hallucination” problem is particularly concerning for applications like medical advice or legal information where accuracy is critical.

The Future of NLP

Several trends will shape NLP’s evolution over the coming years.

Multimodal Integration

Language does not exist in isolation. Future NLP systems will integrate speech, gestures, facial expressions, and images. OpenAI’s GPT-4V and Google’s Gemini already demonstrate vision-language capabilities.

Personalization and Memory

Many current systems treat each conversation as largely independent. Future systems will maintain persistent memory across conversations and adapt to individual users.

Reasoning and Tool Use

The next generation of NLP systems will combine language understanding with explicit reasoning capabilities and the ability to use external tools like calculators, search engines, or APIs.
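A tool-use loop can be sketched as a dispatcher that runs whatever tool the model asks for. The request format `{"tool": ..., "input": ...}` and the tool registry are hypothetical, and the model's request is hard-coded here instead of coming from a real LLM. The calculator parses arithmetic with Python's `ast` module so that it never calls `eval` on model output.

```python
import ast
import operator

OPERATORS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def calculate(expression: str):
    """Evaluate +, -, *, / arithmetic without executing arbitrary code."""
    def evaluate(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPERATORS:
            return OPERATORS[type(node.op)](evaluate(node.left), evaluate(node.right))
        raise ValueError("unsupported expression")
    return evaluate(ast.parse(expression, mode="eval").body)

TOOLS = {"calculator": calculate}  # hypothetical tool registry

def run_tool_call(call: dict) -> str:
    """Execute a tool request shaped like {"tool": name, "input": text}."""
    tool = TOOLS.get(call["tool"])
    if tool is None:
        return f"error: unknown tool {call['tool']!r}"
    return str(tool(call["input"]))

# Stand-in for what a language model might emit when it decides to use a tool.
model_request = {"tool": "calculator", "input": "1234 * 5678"}
print(run_tool_call(model_request))  # 7006652
```

The result would then be fed back into the model's context, so that exact arithmetic comes from the tool rather than from next-word prediction.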

On-Device Processing

Privacy concerns and latency requirements are driving NLP toward edge computing. Apple’s Neural Engine and Qualcomm’s AI accelerators enable sophisticated language processing on smartphones without sending data to the cloud.

Strategic Implications

For organizations considering NLP investments, several principles guide effective deployment.

Start with Problems, Not Technology

NLP is a means to an end. The most successful implementations start with clear business problems and evaluate whether NLP is the right solution.

Good fits for NLP:

- Processing large volumes of text data

- Enabling natural language interfaces

- Extracting structured information from documents

- Analyzing sentiment and feedback at scale

Data Is the Differentiator

While foundation models provide baseline capabilities, proprietary data creates competitive advantage: domain-specific language, historical examples, and user feedback.

Human-in-the-Loop Design

For high-stakes applications, design systems that combine NLP automation with human oversight. NLP handles routine cases automatically; uncertain cases are escalated to humans.
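The escalation rule can be as simple as a confidence threshold, as in the sketch below. The 0.9 threshold, the field names, and the example labels are assumptions. In practice the threshold is tuned against the cost of errors and the capacity of the review team.

```python
def route(prediction: str, confidence: float, threshold: float = 0.9) -> dict:
    """Accept confident predictions; send uncertain ones to a human reviewer."""
    if confidence >= threshold:
        return {"handled_by": "model", "label": prediction}
    # Keep the model's guess as a suggestion to speed up the human decision.
    return {"handled_by": "human_review", "label": None, "suggested": prediction}

print(route("refund_request", 0.97))
print(route("legal_complaint", 0.55))
```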

Consider the Full Stack

NLP does not exist in isolation. Effective applications require data infrastructure, model serving, user interfaces, and monitoring systems.

Conclusion

Natural Language Processing has evolved from an academic curiosity to a foundational technology that touches billions of lives. The journey from simple spam filters to conversational AI that can hold fluent, open-ended dialogue represents one of the most significant achievements in computer science.

Yet we are still in the early stages. Current systems handle language with remarkable fluency but lack the deeper understanding that humans take for granted. The coming years will see continued progress toward systems that not only process language but truly comprehend it.

For practitioners, investors, and citizens, understanding NLP is essential. It is not just a technical domain—it is the interface through which humans and machines will increasingly interact, collaborate, and perhaps one day understand each other.

Sources

- IBM Training: “What Is NLP (Natural Language Processing)?” by Martin Keen

- IBM Training: “Natural Language Processing, Speech, and Computer Vision”

- Vaswani et al. (2017): “Attention Is All You Need” — Transformer architecture

- Brown et al. (2020): “Language Models are Few-Shot Learners” — GPT-3

- OpenAI (2023): GPT-4 Technical Report

- Google Research: Neural Machine Translation

- Meta AI: No Language Left Behind (NLLB)