There is a version of AI that knows exactly who you are, what you already understand, what decisions you’ve made, what you’ve rejected, and what you’re working toward. It doesn’t explain things you already know. It doesn’t retrieve documents you’ve already processed. It doesn’t give you generic answers tuned for a median user who doesn’t exist.

That version of AI doesn’t ship yet, at least not fully. But the architecture to build it exists right now, assembled from pieces that are already in production. And the gap between “what AI does today” and “what personalised AI will do tomorrow” is one of the most underexplored performance differentials in the field.

This is a breakdown of that architecture: what’s wrong with current approaches, why the hybrid answer is the right one, and what the three-year outcome looks like when it lands.

What RAG Gets Wrong (And What It Gets Right)

Retrieval-Augmented Generation — RAG — is the current dominant paradigm for making AI systems useful on specific knowledge. The mechanics are straightforward: when a user asks a question, the system retrieves relevant chunks from a knowledge base (documents, notes, emails, databases), passes those chunks to a language model as context, and the model generates a response grounded in retrieved material rather than just its training data.
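Here is a minimal sketch of that retrieve-then-generate loop. It assumes a toy corpus and uses plain word overlap as a stand-in for a real embedding model. `retrieve` and `build_prompt` are hypothetical helpers, and the model call itself is omitted. Notice that `retrieve` takes only the query. Nothing about the user enters the pipeline.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercased word set: a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks sharing the most words with the query.

    Note the signature: there is no user argument, so every user
    asking the same question gets exactly the same chunks.
    """
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, retrieved: list[str]) -> str:
    """Number each chunk so the model can cite it as [1], [2], ..."""
    sources = "\n".join(f"[{i}] {c}" for i, c in enumerate(retrieved, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{sources}\n\nQuestion: {query}"
    )


chunks = [
    "Bitcoin self-custody uses a hardware wallet.",
    "Exchanges hold customer assets in pooled accounts.",
    "HBM supply is constrained by packaging capacity.",
]
query = "How does Bitcoin self-custody work?"
print(build_prompt(query, retrieve(query, chunks)))
```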

This is a genuine improvement over pure language model prompting. RAG systems are grounded. They can cite sources. They stay current when the knowledge base updates. They are much less likely to hallucinate facts they can look up.

But current RAG systems have a structural blind spot: they are stateless. Every query starts from zero. The system finds documents relevant to the words in your query. It has no model of who you are, what you already know, what decisions you’ve made, or where you are in a project. It treats every user identically.

Ask a generic RAG system “what should I do about my Bitcoin position?” and it will retrieve general information about Bitcoin, perhaps some market analysis, and generate a reasonable generic answer. It won’t know that you’ve already decided on a self-custody strategy, that you’ve rejected exchange-held assets, that you’ve been tracking a specific technical level for three months, and that you’re waiting for a particular macro trigger before acting.

That context gap is the problem. And it compounds: the more sophisticated your thinking, the worse generic RAG serves you — because the delta between your actual context and the median user context grows with expertise.

💡 Key insight: The more expert you become, the worse generic AI serves you. Personalisation value scales with user sophistication.

What Agentic AI Gets Wrong (And What It Gets Right)

The other dominant paradigm right now is agentic AI — systems that don’t just answer questions but take sequences of actions to accomplish goals. An agent can search the web, write and run code, send emails, update databases, and coordinate with other agents. It acts rather than just responds.

The upside is real. Agentic systems can handle multi-step workflows that would require dozens of individual prompts from a human. They can work in parallel, operate overnight, and do work that pure chat interfaces can’t touch. The 2026 AI agent stack is genuinely useful in ways that weren’t possible 18 months ago.

But agents without personal grounding drift. They make decisions that are locally reasonable but globally wrong for a specific user. They take generic approaches when a personalised approach is needed. Without persistent knowledge of who they’re acting for — their values, their constraints, their existing commitments — agents are powerful tools pointed in approximately the right direction.

“Approximately right” is not good enough when agents are spending money, sending communications, or making commitments on your behalf.

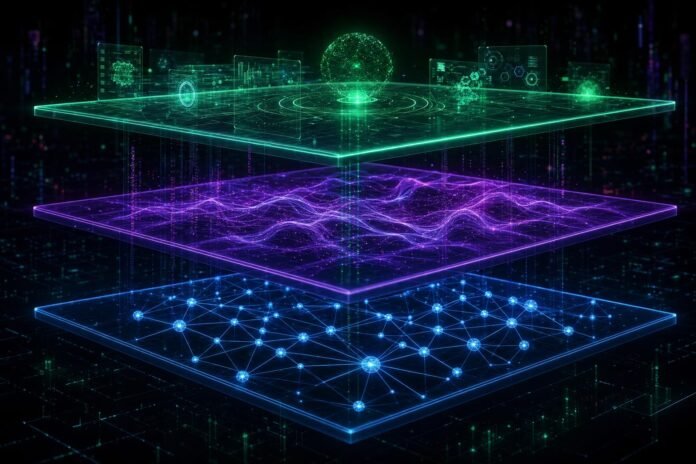

The Three-Layer Architecture That Fixes Both

The solution isn’t better RAG or better agents in isolation. It’s a hybrid architecture that combines three distinct capabilities, each addressing a different failure mode.

Layer 1: Personal Knowledge Graph

The foundation isn’t a flat document store — it’s a structured knowledge graph that captures relationships between ideas, not just the ideas themselves.

Current RAG splits documents into chunks and embeds them as vectors. When you query, it finds the chunks whose embeddings are closest to your query embedding. This works for finding relevant documents but loses the connective tissue: the fact that a signal you captured in January connects to a thesis you developed in March, which informs a decision you’re making now.
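To make the chunk-and-embed step concrete, here is a toy sketch of top-k retrieval by cosine similarity. The three-dimensional vectors and chunk ids are invented for illustration. Real embedding models produce hundreds of dimensions. The point is that each chunk is scored in isolation, so nothing in the index records that the January signal feeds the March thesis.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """index maps chunk id -> embedding; returns ids ranked by similarity."""
    ranked = sorted(index, key=lambda cid: cosine(query_vec, index[cid]), reverse=True)
    return ranked[:k]


# Hypothetical embeddings for three chunks that are related in reality,
# but nothing in this flat index says so.
index = {
    "jan_signal": [0.9, 0.1, 0.0],
    "mar_thesis": [0.2, 0.9, 0.1],
    "current_decision": [0.1, 0.2, 0.9],
}
print(top_k([1.0, 0.0, 0.0], index, k=1))  # ['jan_signal']
```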

A proper personal knowledge graph stores nodes (concepts, decisions, signals, people, projects) and edges (the relationships between them). When you query, you don’t just retrieve relevant chunks — you retrieve a subgraph: the relevant cluster of connected knowledge that gives the AI genuine context for your situation.
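A subgraph query can be sketched as a breadth-first walk from one or more seed nodes, collecting every typed edge within a hop limit. The node names, relation labels and the `max_hops` default below are hypothetical. Edges are treated as directed for simplicity.

```python
from collections import deque

# Hypothetical graph: node -> list of (relation, neighbour) edges.
edges = {
    "jan_signal": [("supports", "mar_thesis")],
    "mar_thesis": [("informs", "current_decision")],
    "current_decision": [],
    "unrelated_note": [],
}


def subgraph(graph, seeds, max_hops=2):
    """Breadth-first expansion from seed nodes.

    Returns the (source, relation, target) triples reachable within max_hops.
    """
    seen = set(seeds)
    frontier = deque((s, 0) for s in seeds)
    triples = []
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for relation, neighbour in graph.get(node, []):
            triples.append((node, relation, neighbour))
            if neighbour not in seen:
                seen.add(neighbour)
                frontier.append((neighbour, depth + 1))
    return triples


print(subgraph(edges, ["jan_signal"]))
```

Starting from the January signal, two hops recover the whole chain from signal to thesis to decision. That is the connective tissue a flat vector ranking does not return.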

Tools like Obsidian with its graph view are a primitive version of this. The next generation adds vector search on top of the graph structure — you get semantic retrieval plus structural relationships. That combination is far more powerful than either alone.
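The combination can be sketched in two steps. First, vector similarity picks the entry points. Then graph expansion pulls in whatever those entry points connect to. This is one common way to pair the two and does not describe any particular product. The embeddings, graph and parameters are illustrative assumptions.

```python
import math
from collections import deque


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return dot / norm if norm else 0.0


def hybrid_retrieve(query_vec, embeddings, graph, k=1, max_hops=2):
    """Vector search picks k seed nodes; graph expansion adds their neighbourhood."""
    # Step 1: semantic retrieval chooses entry points into the graph.
    seeds = sorted(embeddings, key=lambda n: cosine(query_vec, embeddings[n]),
                   reverse=True)[:k]
    # Step 2: structural expansion follows edges outward, up to max_hops.
    seen = set(seeds)
    frontier = deque((s, 0) for s in seeds)
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for _relation, neighbour in graph.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                frontier.append((neighbour, depth + 1))
    return sorted(seen)


embeddings = {  # hypothetical 3-d embeddings
    "jan_signal": [0.9, 0.1, 0.0],
    "mar_thesis": [0.2, 0.9, 0.1],
    "current_decision": [0.1, 0.2, 0.9],
    "unrelated_note": [0.1, 0.1, 0.1],
}
graph = {
    "jan_signal": [("supports", "mar_thesis")],
    "mar_thesis": [("informs", "current_decision")],
}
print(hybrid_retrieve([1.0, 0.0, 0.0], embeddings, graph))
```

A query that semantically matches only the January signal still returns the thesis and decision it connects to. The unrelated note is left out even though it has a non-zero similarity score.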

Layer 2: Personal Memory Layer

The second layer is distinct from the knowledge graph and more important: a structured model of who you are, not just what you know.

This includes:

- Working theories — the hypotheses you’re currently operating under (“infrastructure constraints are the real AI bottleneck, not model capability”)

- Committed decisions — choices you’ve made and don’t need to revisit (“self-custody only, no exchange holdings”)

- Rejected options — things you’ve considered and ruled out, with reasons

- Communication style — how you prefer information delivered, what level of detail you want, what you find useful vs. noise

- Active projects and their current state — where you actually are, not where a fresh context thinks you might be
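One way to hold this layer is as an explicit, typed record rather than free text. The sketch below assumes a hypothetical `PersonalMemory` schema with one field per category above. Its `to_context` method renders the record for a prompt. Field names and example values, including the rejection reason, are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class RejectedOption:
    option: str
    reason: str


@dataclass
class PersonalMemory:
    """Hypothetical schema: one field per category of the memory layer."""
    working_theories: list[str] = field(default_factory=list)
    committed_decisions: list[str] = field(default_factory=list)
    rejected_options: list[RejectedOption] = field(default_factory=list)
    communication_style: dict[str, str] = field(default_factory=dict)
    active_projects: dict[str, str] = field(default_factory=dict)  # name -> current state

    def to_context(self) -> str:
        """Render the record as a compact block to prepend to a prompt or brief."""
        lines = ["USER PROFILE (memory layer)"]
        lines += [f"- Working theory: {t}" for t in self.working_theories]
        lines += [f"- Decided: {d}" for d in self.committed_decisions]
        lines += [f"- Rejected: {r.option} ({r.reason})" for r in self.rejected_options]
        lines += [f"- Style, {k}: {v}" for k, v in self.communication_style.items()]
        lines += [f"- Project {p}: {s}" for p, s in self.active_projects.items()]
        return "\n".join(lines)


memory = PersonalMemory(
    working_theories=["infrastructure constraints are the real AI bottleneck, not model capability"],
    committed_decisions=["self-custody only, no exchange holdings"],
    rejected_options=[RejectedOption("exchange-held assets", "counterparty risk")],
    communication_style={"detail": "high-level first, specifics on request"},
    active_projects={"HBM research": "three months of supply tracking"},
)
print(memory.to_context())
```

Keeping decisions and rejections as separate typed fields lets downstream code treat them differently. For example, an agent can be told never to reopen anything in `committed_decisions`.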

This is what OpenAI’s Memory feature and Claude’s Projects are attempting to build at the product level, and early user reports suggest persistent memory makes answers noticeably more relevant on personalised tasks. But these implementations are cloud-hosted, owned by the provider, and only partially structured.

The owned version — a local memory layer you control, that updates with every interaction, that no provider can access or train on — is the defensible version. It’s also the version that compounds over time into a genuine competitive moat.

Layer 3: Agentic Execution with Personal Context Injection

The third layer is where the action happens. Agents become genuinely useful when every task brief is pre-loaded with both the knowledge graph context (what’s relevant to this task) and the memory layer context (who is this person, what are their priors, how do they work).

Instead of an agent that asks “what should I research?” — it knows you’ve already tracked HBM supply constraints for three months, understands your framework for evaluating commodity plays, and picks up exactly where you left off. It acts as an extension of your existing thinking, not as a fresh analyst starting from scratch.
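Context injection can be sketched as assembling a brief from both layers before the agent starts work. The section headings and the `build_task_brief` helper are hypothetical. In practice the inputs would come from the subgraph query and the memory record rather than being passed in by hand.

```python
def build_task_brief(task: str, graph_facts: list[str], decisions: list[str],
                     rejected: list[str], style: str) -> str:
    """Assemble an agent brief carrying both context layers (hypothetical format)."""
    lines = [f"TASK: {task}", "", "RELEVANT KNOWLEDGE (knowledge graph):"]
    lines += [f"- {fact}" for fact in graph_facts]
    lines += ["", "SETTLED, DO NOT REVISIT (memory layer):"]
    lines += [f"- Decided: {d}" for d in decisions]
    lines += [f"- Rejected: {r}" for r in rejected]
    lines += ["", f"OUTPUT STYLE: {style}"]
    return "\n".join(lines)


brief = build_task_brief(
    task="Update the HBM supply note with this week's developments",
    graph_facts=[
        "HBM supply constraints tracked for three months",
        "Commodity plays are evaluated with the user's existing framework",
    ],
    decisions=["Continue the existing note; do not restart the analysis"],
    rejected=["Generic market overviews"],
    style="Concise, only what changed since the last update",
)
print(brief)
```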

The compound effect of all three layers working together:

- Layer 1 provides accurate, relationship-aware retrieval

- Layer 2 provides user-specific priors and decision context

- Layer 3 executes with both layers as grounding

And critically: every execution feeds back. The agent’s output — the decision made, the research completed, the action taken — updates both the knowledge graph and the memory layer. The system improves with every use. Generic AI does not do this.
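The write-back step might look like this. The agent’s result becomes a new node linked to the knowledge it drew on, and any decision or project progress is written into memory. The dictionary schemas, the `led_to` relation and the `record_outcome` helper are hypothetical assumptions for illustration.

```python
def record_outcome(graph: dict, memory: dict, result_node: str, outcome: dict) -> None:
    """Write an agent's result back into both layers (hypothetical schemas).

    graph:   node -> list of (relation, neighbour) edges
    memory:  {"decisions": [...], "projects": {name: current_state}}
    outcome: {"derived_from": [...], "project": str, "new_state": str,
              "decision": optional str}
    """
    # Layer 1: the result becomes a node linked to the knowledge it drew on.
    graph.setdefault(result_node, [])
    for source in outcome["derived_from"]:
        graph.setdefault(source, []).append(("led_to", result_node))
    # Layer 2: promote any decision and advance the project's recorded state.
    if outcome.get("decision"):
        memory["decisions"].append(outcome["decision"])
    memory["projects"][outcome["project"]] = outcome["new_state"]


graph = {"mar_thesis": []}
memory = {"decisions": [], "projects": {"hbm": "tracking supply"}}
record_outcome(graph, memory, "hbm_q3_research", {
    "derived_from": ["mar_thesis"],
    "project": "hbm",
    "new_state": "research complete, awaiting decision",
})
print(graph, memory)
```

The next query that touches the March thesis now reaches the new research through an ordinary graph walk. No retraining is needed.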

Also worth reading: Our analysis of what happens when AI agents start spending real money autonomously — which is exactly where this architecture leads.

The Feedback Loop Is the Moat

Most discussions of personalised AI focus on retrieval quality: how accurately can the system find relevant information? That’s the wrong frame. Retrieval quality is fast becoming a commodity, an improvement every system will eventually have.

The real moat is the feedback loop.

Consider two users who both start using a personalised AI system on the same day. User A uses it actively — every significant decision, every piece of research, every project update gets captured. After six months, their system has a rich model of their thinking, their domain expertise, their working theories, their decision patterns.

User B uses it passively — occasional queries, no active knowledge capture. After six months, their system is barely better than day one.

The gap between these users widens monotonically. The system that knows you compounds. The system that doesn’t stays flat. This is fundamentally different from generic AI, where both users get the same quality of response regardless of their history with the system.

That compounding is the moat. And it’s a moat that belongs to the user, not the provider — if the system is built correctly. Which brings up the most important architectural decision in this space.

The Ownership Question: Cloud vs. Local

Every major AI provider is building personalisation features. OpenAI Memory, Claude Projects, Google’s personalised Gemini, Microsoft Copilot with user context. They all work, to varying degrees. They all have the same structural problem: your personal context is owned by the provider.

Your knowledge graph, your memory layer, your decision history, your working theories: all of it sits on their servers, governed by their retention policies and, depending on the provider and your settings, potentially used to improve their models. The moat you build belongs to them as much as to you.

The alternative is local-first personalised AI. Your knowledge graph and memory layer run on your hardware, and your full personal store never leaves your machine. The AI model either runs locally or is called via API with only the context a given query needs, never your raw data.
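When a remote API is involved, one way to honour that boundary is to filter context locally before anything is sent. The sketch below assumes each retrieved item carries a relevance score and a hypothetical `private` flag. Only non-private items, capped at a small budget, are released to the remote model.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    relevance: float  # e.g. a similarity score from the local retriever
    private: bool     # hypothetical flag: True means never send off-device


def outbound_context(items: list[ContextItem], budget: int = 3) -> list[str]:
    """Choose what a remote model may see: non-private items only, most
    relevant first, capped at `budget`. Everything else stays local."""
    shareable = [item for item in items if not item.private]
    shareable.sort(key=lambda item: item.relevance, reverse=True)
    return [item.text for item in shareable[:budget]]


items = [
    ContextItem("Wallet seed location", 0.95, private=True),
    ContextItem("Waiting for a macro trigger before acting", 0.80, private=False),
    ContextItem("Self-custody only, no exchange holdings", 0.70, private=False),
    ContextItem("Unrelated meeting note", 0.10, private=False),
]
print(outbound_context(items, budget=2))
```

Here the filter runs before relevance ranking. The most relevant item is never considered for release if it is marked private.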

Apple silicon — specifically the M4 chip — makes this viable at consumer price points for the first time. A Mac Mini M4 has enough unified memory and Neural Engine throughput to run meaningful local inference alongside a vector database and knowledge graph. The infrastructure for owned personal AI exists at $599.
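A rough way to check what fits is to estimate weight memory as parameter count times bits per weight divided by eight. The sketch below assumes a 16 GB unified-memory configuration. It reserves a hypothetical 6 GB of headroom for the operating system, vector database and graph. It ignores the KV cache and runtime overhead, so real memory use will be higher than these estimates.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal GB.

    Ignores the KV cache, activations and runtime overhead."""
    return params_billion * bits_per_weight / 8


def fits_locally(params_billion: float, bits_per_weight: int,
                 unified_gb: float = 16.0, headroom_gb: float = 6.0) -> bool:
    """True if the weights fit after reserving headroom (an assumption) for
    the OS, the vector database and the knowledge graph."""
    return weight_memory_gb(params_billion, bits_per_weight) <= unified_gb - headroom_gb


for params, bits in [(8, 4), (8, 16), (70, 4)]:
    print(f"{params}B @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB, "
          f"fits: {fits_locally(params, bits)}")
```

Under these assumptions, an 8-billion-parameter model quantised to 4 bits needs about 4 GB for its weights and fits comfortably. The same model at 16-bit precision does not.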

The privacy-performance tradeoff is real but narrowing. Local inference on M4 is slower than GPT-4o API calls. Local embedding models are less capable than OpenAI’s best. But the gap is closing every six months, and the ownership advantage — particularly for professional and financial contexts where data privacy is material — is permanent.

The Contrarian Case: Fine-Tuning Over RAG

There is a serious argument that the right long-term answer isn’t RAG plus memory at all — it’s fine-tuning. Instead of retrieving personal context at inference time, you bake it directly into model weights: a LoRA adapter trained on your writing, your decisions, your domain expertise. The model doesn’t look up what you know; it embodies it.
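LoRA’s appeal for personal adapters comes from its parameter arithmetic. Instead of updating a full d × k weight matrix W, it freezes W and learns a low-rank update ΔW = BA, with B of shape d × r and A of shape r × k. The trainable count drops from dk to r(d + k). The sketch computes both. The 4096 × 4096 matrix and rank 8 are illustrative choices, not values from any particular model.

```python
def lora_trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """Trainable parameters for one d x k weight matrix.

    Full fine-tuning updates every entry of W (d * k values). LoRA freezes W
    and trains B (d x r) and A (r x k), so the update BA costs r * (d + k).
    """
    full = d * k
    lora = r * (d + k)
    return full, lora


full, lora = lora_trainable_params(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

At well under one percent of the full matrix per adapted layer, a personal adapter is small enough to store, swap and retrain periodically.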

The case for fine-tuning is real: faster inference (no retrieval step), deeper integration of personal style and reasoning patterns, no retrieval failures. A fine-tuned model doesn’t miss relevant context because it didn’t match a vector similarity threshold — it just knows.

The case against, for now: fine-tuning requires more high-quality personal data than most users have generated. It goes stale — the model baked with your January thinking doesn’t know what you learned in April. And it’s expensive to update; you can’t fine-tune after every conversation the way you can update a knowledge graph.

The three-year answer is probably a hybrid of all three approaches:

- Fine-tuning for stable personal style and domain expertise (update quarterly)

- RAG with knowledge graph for current knowledge and recent research (update continuously)

- Memory layer for active decisions, projects, and priors (update per session)
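The three timescales can be expressed as a simple update policy. This is a sketch under assumptions: the event names are hypothetical, 90 days stands in for “quarterly”, and a real system would likely batch and debounce these updates.

```python
from datetime import date, timedelta

# 90 days approximates the "update quarterly" cadence for the adapter.
FINE_TUNE_INTERVAL = timedelta(days=90)


def layers_to_update(event: str, last_fine_tune: date, today: date) -> list[str]:
    """Return which layers an event should refresh (hypothetical event names)."""
    due = []
    if event == "document_added":
        due.append("knowledge_graph")    # continuous: index new material immediately
    if event == "session_end":
        due.append("memory")             # per session: decisions, projects, priors
    if today - last_fine_tune >= FINE_TUNE_INTERVAL:
        due.append("fine_tune_adapter")  # quarterly: stable style and expertise
    return due


print(layers_to_update("session_end", date(2025, 1, 1), date(2025, 2, 1)))
```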

Each layer handles a different timescale of personal context. Together, they produce an AI that genuinely knows you — not just your words, but your thinking.

Where This Is Going

The trajectory is clear. Generic AI is already commoditising — the performance difference between top-tier models is narrowing, and price competition is intense. The next differentiation layer isn’t model capability; it’s personalisation depth.

The users and organisations that invest now in structured personal knowledge graphs — capturing decisions, signals, working theories, and project state in structured, queryable form — are building the substrate that personalised AI needs. The ones who don’t will be using generic AI tools while their counterparts operate with systems that know their domain as well as they do.

The question isn’t whether personalised AI outperforms generic AI. It demonstrably does, even with today’s primitive implementations. The question is who owns the personal context layer — you, or the platform.

Build the knowledge graph. Own the data. The compound returns start on day one.

Related Reading

- Vector Databases for RAG: From Chroma to Production — The infrastructure layer for personal knowledge graphs

- What Are AI Agents? From Monolithic Models to Autonomous Systems — How agents work and why personalisation changes the calculus

- The Invisible OS: How AI Agents Are Becoming the New Operating System — The broader shift agents are driving across software

- The 2026 AI Agent Stack: What’s Actually Working — Current state of agentic tooling

Sources

- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks — Lewis et al., Facebook AI Research (original RAG paper)

- Memory and New Controls for ChatGPT — OpenAI, 2024

- Claude Projects — Anthropic, 2024

- Obsidian — Personal Knowledge Management — Obsidian.md

- Oxford Nanopore + Apple M4 Partnership — Oxford Nanopore Technologies (for Apple silicon local inference context)

- LoRA: Low-Rank Adaptation of Large Language Models — Hu et al., Microsoft Research

- What Is a Knowledge Graph? — Weaviate

- Knowledge Graph + RAG: Better Together — Neo4j

- Mac Mini M4 Specifications — Apple

- Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection — Asai et al.

- RAG vs Fine-Tuning: Pipelines, Tradeoffs, and a Case Study on Agriculture — Ovadia et al.

- LLM Powered Autonomous Agents — Lilian Weng, OpenAI