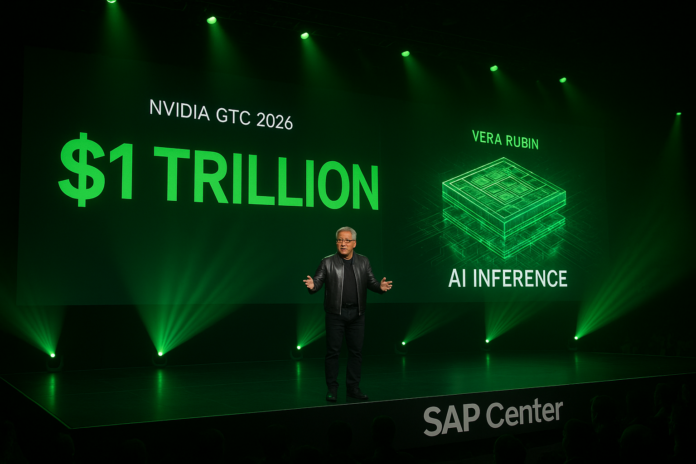

NVIDIA GTC 2026: The $1 Trillion Bet on AI Inference

Jensen Huang doubled NVIDIA’s revenue forecast in four weeks. The message is clear: the AI race has shifted from training to inference, and NVIDIA plans to own it. Here’s everything that mattered from GTC 2026.

The Number That Changed Everything

$1 trillion.

That’s how much revenue NVIDIA now sees from Blackwell and Vera Rubin through 2027.

Four weeks ago, on the February earnings call, the number was $500 billion for Blackwell and Rubin through 2026. In one keynote, Jensen Huang doubled it and pushed the window out to 2027.

This isn’t a small adjustment. This is a declaration that the AI infrastructure market is twice as big as they thought—and they’re positioned to capture most of it.

The stock market responded immediately. NVIDIA shares rose as investors digested what this means: the AI boom isn’t slowing. It’s accelerating. And NVIDIA’s dominance may be strengthening, not weakening.

The Shift: From Training to Inference

What Changed

For three years, the AI narrative has been about training. Bigger models. More parameters. Massive compute clusters teaching AI systems to understand language, generate images, write code.

NVIDIA owned this market. Their GPUs were the default choice for training large AI models. Companies spent billions on H100s, then H200s, and now Blackwell.

But training is largely a one-time cost per model. You train the model, then you’re done until the next one. The real money (recurring, scaling, compounding revenue) is in inference.

Inference is running AI models in production. Every ChatGPT query. Every Midjourney image. Every Claude conversation. That’s inference. And it happens billions of times per day.

Jensen’s Declaration

“We’ve reached an inflection point for inference.”

That’s what Huang said on stage at the SAP Center in San Jose. Thirty-nine thousand people from 190 countries listened as he explained the shift.

Training is expensive but finite. Inference is cheap but infinite. The company that makes inference cheapest wins the AI economy.

NVIDIA’s bet: that’s them.

Vera Rubin: The 10x Platform

The Announcement

Vera Rubin isn’t just a new GPU. It’s a new architecture designed specifically for inference at scale.

The number: 10x reduction in token cost versus Blackwell.

This means AI companies can run their models for one-tenth the cost. Or, more likely, they can run ten times more AI for the same price.

Either way, NVIDIA wins. Lower costs expand the market. More AI means more chips.
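The two readings of the 10x claim are the same arithmetic seen from different sides. The sketch below works through both. The baseline price per million tokens and the monthly budget are hypothetical placeholders chosen for illustration, not figures from the keynote; only the 10x factor comes from the announcement.

```python
# Hypothetical baseline: dollars per million tokens on Blackwell.
# Illustrative assumption only, not a number from the keynote.
BASELINE_COST_PER_M_TOKENS = 2.00
COST_REDUCTION = 10  # the claimed 10x token-cost reduction versus Blackwell


def cost_per_m_tokens(baseline: float, reduction: float) -> float:
    """Cost per million tokens after dividing the baseline by the reduction factor."""
    return baseline / reduction


def tokens_for_budget(budget: float, cost_per_m: float) -> float:
    """Millions of tokens a fixed budget buys at a given cost per million tokens."""
    return budget / cost_per_m


budget = 1_000_000.0  # hypothetical monthly inference budget, in dollars
new_cost = cost_per_m_tokens(BASELINE_COST_PER_M_TOKENS, COST_REDUCTION)
old_volume = tokens_for_budget(budget, BASELINE_COST_PER_M_TOKENS)
new_volume = tokens_for_budget(budget, new_cost)

print(f"Cost per million tokens: ${BASELINE_COST_PER_M_TOKENS:.2f} -> ${new_cost:.2f}")
print(f"Volume at the same budget: {new_volume / old_volume:.0f}x")
```

Whatever baseline is plugged in, the ratio stays the same: the same workload costs one-tenth as much, or the same budget buys ten times the tokens.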

Why It Matters

For AI companies: Lower inference costs = higher margins = more competitive pricing = more customers.

For NVIDIA: Even if they sell chips at lower margins, the volume expansion more than compensates.

For competitors: The bar just got ten times higher. Good luck catching up.

The Technical Details

Huang showed charts comparing inference performance and efficiency. The Vera Rubin platform isn’t just faster—it’s fundamentally more efficient at the specific task of running AI models in production.

Key innovations:

- Memory bandwidth: Critical for inference, massively upgraded

- Power efficiency: Less energy per token, which means lower energy bills and a lower cost per token

- Scalability: Designed for data centers, not just research labs

The $1 Trillion Math

How We Got Here

February 2026: NVIDIA sees $500B demand for Blackwell and Rubin through 2026.

March 2026: NVIDIA sees $1T demand for Blackwell and Vera Rubin through 2027.

What changed in four weeks?

- Agentic AI exploded: OpenClaw, custom agents, enterprise automation

- Inference demand surged: ChatGPT, Claude, Gemini usage accelerating

- Competition fears faded: Terafab threat seems manageable

- Enterprise adoption: Every Fortune 500 company building AI infrastructure

Breaking Down the Number

$1 trillion through 2027 means roughly:

- $300-400B in 2026

- $600-700B in 2027

For context, NVIDIA’s total revenue in 2025 was about $130 billion. Against that base, $600-700B in 2027 implies roughly 5x growth in two years.
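The growth implied by this split can be checked directly. A minimal sketch, using only the article's own rough figures (about $130B for 2025, and the $300-400B and $600-700B ranges); the variable and function names are illustrative.

```python
REVENUE_2025_B = 130  # article's figure for 2025 revenue, in $ billions
SPLIT_B = {2026: (300, 400), 2027: (600, 700)}  # article's rough split, in $ billions


def growth_multiple(revenue_b: float, base_b: float) -> float:
    """Revenue expressed as a multiple of the base-year revenue."""
    return revenue_b / base_b


for year, (low, high) in SPLIT_B.items():
    low_x = growth_multiple(low, REVENUE_2025_B)
    high_x = growth_multiple(high, REVENUE_2025_B)
    print(f"{year}: {low_x:.1f}x to {high_x:.1f}x the 2025 base")

# Sanity check: the two yearly ranges should bracket the $1T headline figure.
total_low = sum(low for low, _ in SPLIT_B.values())
total_high = sum(high for _, high in SPLIT_B.values())
print(f"Two-year total: ${total_low}B to ${total_high}B")
```

On these inputs, 2026 lands at about 2.3x to 3.1x the 2025 base and 2027 at about 4.6x to 5.4x, with a two-year total of $900B to $1,100B.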

This is either the greatest growth story in tech history—or the biggest miss in forecasting history.

The Terafab Response

Addressing the Elephant

Everyone in the room knew about Tesla’s Terafab. Elon Musk’s $10 billion chip factory launches in four days. It’s a direct challenge to NVIDIA’s monopoly.

Huang didn’t mention Terafab by name. He didn’t need to. The message was in the numbers.

10x cost reduction.

Even if Tesla builds its own chips, even if they’re good, can they keep pace with a platform that claims a 10x token-cost cut in one generation? Unlikely.

NVIDIA’s strategy: make the market so big, so efficient, so optimized for their architecture that vertical integration becomes uneconomical.

Why spend $10 billion building a fab when NVIDIA is promising 10x cheaper tokens and further gains every year?

The Moat Deepens

NVIDIA isn’t just selling hardware. They’re selling:

- CUDA: The software layer that makes AI development possible

- Ecosystem: Millions of developers, thousands of tools, hundreds of frameworks

- Optimization: Decades of research into efficient AI computation

Tesla can build chips. They can’t build CUDA. They can’t replicate the ecosystem overnight.

Huang’s bet: by the time competitors catch up on hardware, NVIDIA will be another 10x ahead on software and efficiency.

Agentic AI: The New Frontier

100% Adoption

Here’s a detail that didn’t make the headlines but matters enormously:

100% of NVIDIA is using Claude Code.

Not just engineers. Not just researchers. Everyone. And they use it alongside other AI models.

NVIDIA is eating its own dog food. They’re using AI agents to build AI infrastructure. This isn’t marketing—this is validation that the technology works at scale.

The Implication

If NVIDIA, with 30,000+ employees, can operate using AI agents, so can everyone else.

This validates the entire agentic AI market:

- OpenClaw

- Custom agents

- Enterprise automation

- Developer tools

NVIDIA isn’t just selling the picks and shovels. They’re using them to mine gold.

What We Got Right

From Our Preview

In our NVIDIA GTC 2026 Preview, we predicted:

✅ AI inference push — Confirmed as central theme

✅ Vera Rubin platform — Announced with 10x specs

✅ CPU pivot — Grace Blackwell NVL72 focus

✅ Competition response — Addressed Terafab threat

✅ $500B+ opportunity — Doubled to $1T

What We Missed

❌ 10x cost reduction — Underestimated the efficiency gains

❌ $1T number — Didn’t expect a doubling in four weeks

❌ Agentic AI focus — Underestimated how central this would be

Investment Implications

Bull Case Strengthened

NVIDIA (NVDA):

- Revenue runway doubled

- Inference moat deepening

- Competition fears overblown

- Price target: $400-500 (current: ~$280)
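For readers who want the price target as a percentage, here is a small sketch of the implied upside. It uses only the figures quoted above (a $400-500 target against a price of roughly $280); the names are illustrative, and the result is arithmetic on those inputs, not a forecast.

```python
CURRENT_PRICE = 280.0          # approximate current price quoted above
TARGET_RANGE = (400.0, 500.0)  # price target range quoted above


def implied_upside(target: float, current: float) -> float:
    """Fractional gain needed to move from the current price to the target."""
    return (target - current) / current


low_upside, high_upside = (implied_upside(t, CURRENT_PRICE) for t in TARGET_RANGE)
print(f"Implied upside: {low_upside:.1%} to {high_upside:.1%}")
```

That works out to roughly 43% to 79% above the quoted price.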

AI Infrastructure Plays:

- ASML: More fabs needed for $1T opportunity

- AMAT: Equipment demand accelerating

- Nuclear energy: Power for inference data centers

Risks Remain

Execution: Can NVIDIA actually deliver 10x efficiency gains?

Competition: Tesla, Google, Amazon still building custom chips

Regulation: Antitrust scrutiny as dominance grows

Cyclicality: What happens when the AI boom slows?

The Timeline

2026: The Inference Year

- Vera Rubin ships

- Agentic AI goes mainstream

- Enterprise adoption accelerates

- Competition intensifies but NVIDIA maintains lead

2027: The Scale Year

- $1T revenue opportunity realized (or missed)

- Custom silicon competitors launch

- Market decides: ecosystem vs. vertical integration

- NVIDIA either extends dominance or faces disruption

Beyond: The Platform Era

If NVIDIA executes, they become the Microsoft of AI infrastructure—the platform everything else runs on.

If they stumble, they become the Intel of AI—dominant but slowly eroding.

The Bottom Line

Jensen Huang didn’t just announce new chips. He declared victory in the AI infrastructure war.

The $1 trillion number isn’t a forecast. It’s a statement of intent. NVIDIA believes the AI economy will be so big, so dependent on their technology, that capturing even a fraction of it creates the most valuable company in history.

The Terafab challenge? A speed bump.

The custom silicon threat? Manageable.

The inference competition? Already won.

At least, that’s the bet. In four days, Tesla will have its say. In four months, we’ll have an early read on whether the $1 trillion forecast was prescient or delusional.

But for one afternoon in San Jose, Jensen Huang convinced 39,000 people that the future belongs to NVIDIA.

The market believed him.

Key Takeaways

- $1 trillion revenue opportunity — Doubled from February forecast

- Vera Rubin = 10x inference efficiency — The new competitive moat

- Inference is the new battleground — Training is yesterday’s war

- Terafab threat managed — 10x advantage hard to overcome

- Agentic AI validated — NVIDIA using it at 100% adoption

Related Reading

- NVIDIA GTC 2026 Preview — What we predicted

- Tesla’s Terafab: The $10 Billion Bet — The competition

- Who Wins If Terafab Succeeds? — Investment playbook

- Morgan Stanley AI Warning — The $139B agentic AI market

Sources

- CNBC: NVIDIA GTC 2026 — Keynote coverage

- Reuters: $1 Trillion Forecast — Revenue opportunity

- Tom’s Hardware: Live Blog — Real-time updates

- 24/7 Wall St: Vera Rubin — 10x efficiency claims

- NVIDIA Blog: GTC News — Official announcements

*This analysis was published March 17, 2026, the day after NVIDIA’s GTC keynote. Markets and forecasts may change as new information becomes available.*